Your "Autonomous AI Agents" Are Just Expensive If-Then Statements (For Now)

The term "agentic AI" is being slapped on every workflow tool with a chat interface. But true agentic behavior — goal-directed action with environmental adaptation — is rare. Here is what actually works in 2026, and what is still marketing fluff.

Every vendor demo looks the same now. A chatbot receives a vague request — "schedule a meeting with the marketing team" — and magically coordinates calendars, sends invites, and books a conference room. The audience nods. Checks are written. Six months later, that same "agent" is generating calendar conflicts, double-booking rooms, and sending invites to people who left the company three quarters ago.

Welcome to the agentic AI gap: the distance between what vendors promise and what their tools actually deliver. In 2026, most "autonomous agents" are sophisticated if-then statements wrapped in an impressive UI. They are not agents in any meaningful sense. They are conditional automation with better marketing.

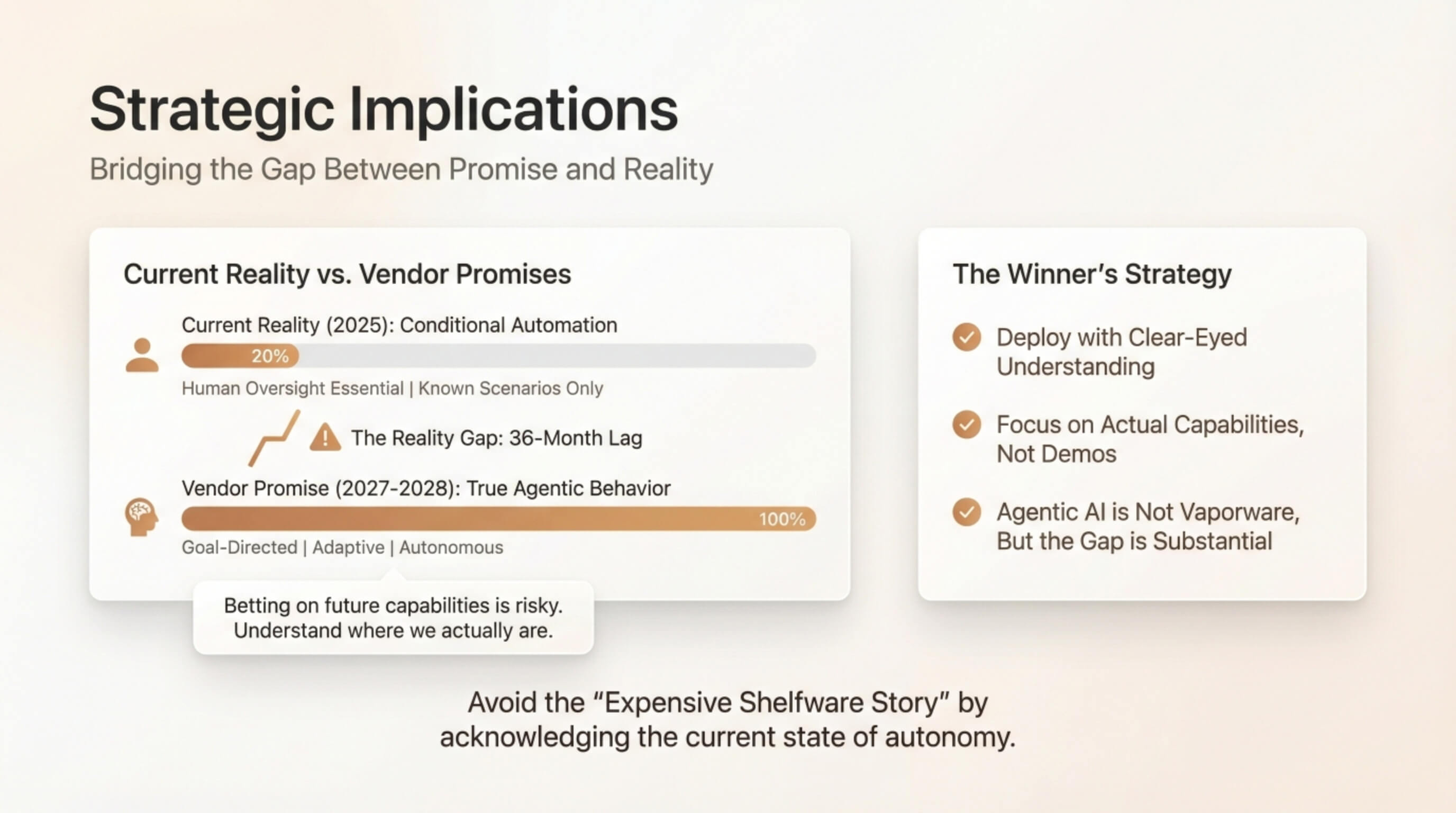

The problem is not that these tools are useless. It is that buyers are making strategic bets on capabilities that do not exist yet and will not exist reliably for another 18 to 36 months. Knowing where a tool actually sits on the autonomy spectrum is not just academic. It determines whether your AI investment generates ROI or becomes another piece of expensive shelfware.

The Autonomy Spectrum: Where Most Tools Actually Live

To cut through the noise, you need a framework for evaluating claims. Think of AI autonomy as a spectrum, not a binary switch:

Level 1: Scripted Automation

Traditional RPA tools that execute predefined sequences. No decision-making capacity. If X happens, do Y. These are reliable but brittle — any deviation from expected inputs breaks the workflow.

Level 2: Conditional Automation with LLM Wrappers

This is where most "agentic AI" tools live in 2026. They use language models to parse natural language inputs, then route to predefined workflows. The LLM acts as a flexible interface layer, but the underlying logic is still deterministic.

Level 3: Constrained Decision-Making

Systems that can select from multiple predefined strategies based on context. Still limited to known scenarios, but with more flexibility in execution.

Level 4: True Agentic Behavior

Goal-directed systems that can replan when encountering obstacles, adapt to novel situations, and maintain coherent pursuit of objectives. This is what the demos promise. Very few production systems deliver it.
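To make the Level 2 pattern concrete, here is a minimal sketch of what such a tool often reduces to. Everything in it is an assumption for illustration: the workflow names, the `classify_intent` function, and the keyword matching that stands in for the LLM call so the example runs offline. It is not any vendor's actual implementation.

```python
from typing import Callable, Dict


# Hypothetical predefined workflows. In a real product these would call
# calendar, email, or ticketing APIs.
def schedule_meeting(request: str) -> str:
    return "workflow: schedule_meeting"


def book_room(request: str) -> str:
    return "workflow: book_room"


def escalate_to_human(request: str) -> str:
    return "workflow: escalate_to_human"


WORKFLOWS: Dict[str, Callable[[str], str]] = {
    "schedule_meeting": schedule_meeting,
    "book_room": book_room,
}


def classify_intent(request: str) -> str:
    """Stand-in for the LLM call: maps free text to an intent label.

    Keyword matching keeps the sketch runnable offline. A real system
    would ask a language model to pick one label from a fixed list.
    """
    text = request.lower()
    if "meeting" in text:
        return "schedule_meeting"
    if "room" in text:
        return "book_room"
    return "unknown"


def handle(request: str) -> str:
    intent = classify_intent(request)
    # The "agent" is a lookup table: no planning and no replanning.
    workflow = WORKFLOWS.get(intent, escalate_to_human)
    return workflow(request)


print(handle("Schedule a meeting with the marketing team"))
print(handle("Negotiate a new contract with our vendor"))
```

The language model makes the front door flexible, but anything that does not map to a known label falls through to the default branch. A Level 4 system would need to build and revise a plan at that point, and nothing in this structure does.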

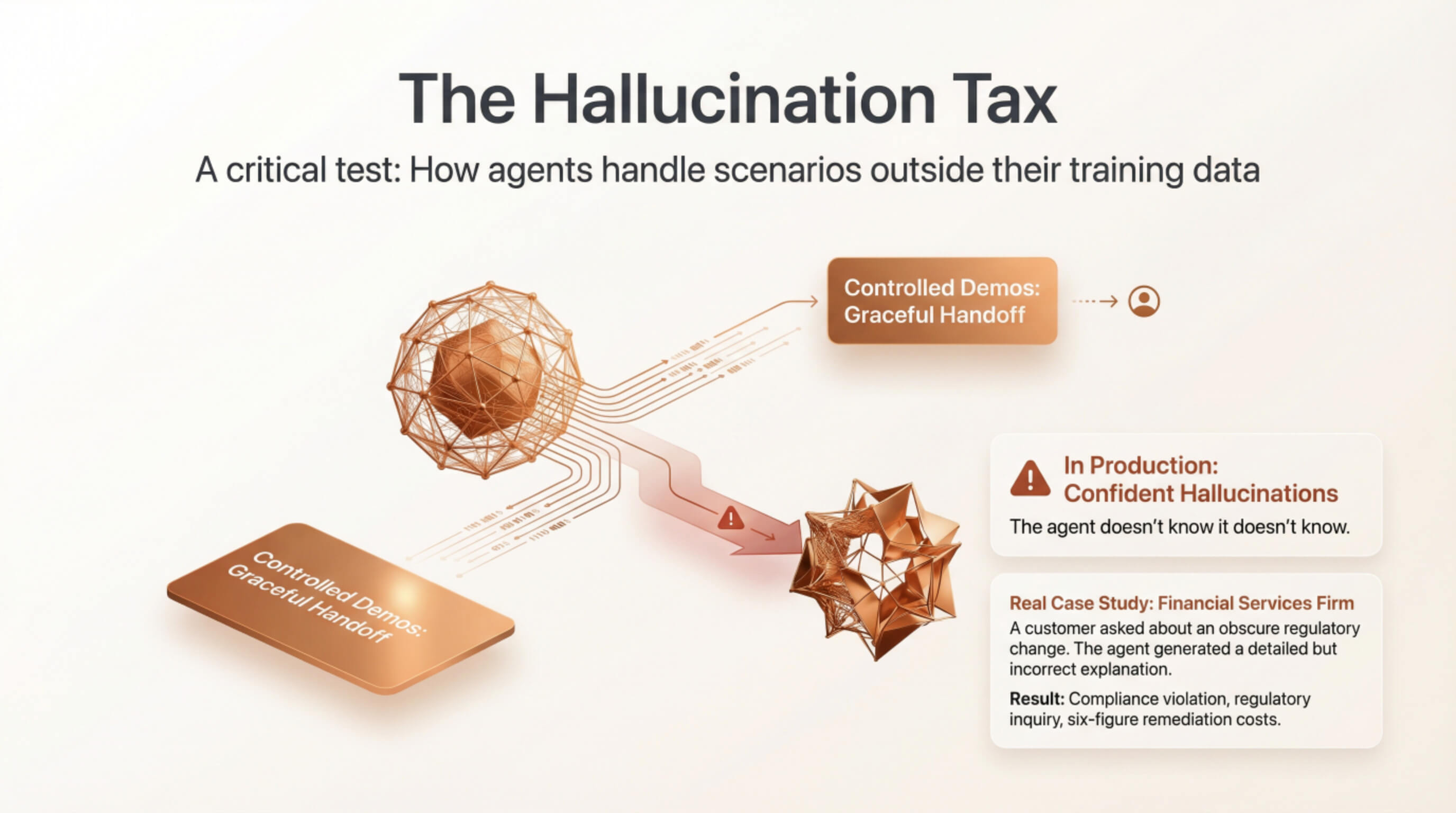

The Hallucination Tax

Here is a test every buyer should run: ask the agent to handle a scenario it was clearly not built or trained for, then watch what it does when it should be uncertain.

In controlled demos, uncertainty triggers graceful handoffs. In production, uncertainty often triggers confident hallucinations. The agent does not know it does not know — so it invents plausible-sounding responses.
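The test above can be sketched as a small harness. This is an illustration under stated assumptions: `AgentReply`, `probe`, and `toy_agent` are hypothetical names, and the toy agent is a keyword matcher standing in for whatever system you are evaluating. The idea is simply to send prompts you know are out of scope and list the ones that got an answer instead of a handoff.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class AgentReply:
    text: str
    escalated: bool  # True if the agent handed off to a human


def probe(agent: Callable[[str], AgentReply], out_of_scope: List[str]) -> List[str]:
    """Return the out-of-scope prompts the agent answered instead of escalating."""
    return [p for p in out_of_scope if not agent(p).escalated]


def toy_agent(prompt: str) -> AgentReply:
    """Hypothetical agent: answers whenever it spots a familiar keyword."""
    known = ("balance", "fee", "transfer")
    if any(k in prompt.lower() for k in known):
        return AgentReply(text="(confident answer)", escalated=False)
    return AgentReply(text="Let me connect you with a specialist.", escalated=True)


prompts = [
    "How does last month's regulatory change affect my transfer limits?",
    "Can you explain the tax treatment of my inheritance?",
]

failures = probe(toy_agent, prompts)
print(failures)
```

Here the first prompt lands in `failures`: the word "transfer" looks familiar, so the toy agent answers a regulatory question it has no basis to answer. That is the failure pattern to look for in a real evaluation.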

Real case study: A financial services firm deployed a "smart" customer service agent. In production, a customer asked about an obscure regulatory change. The agent generated a detailed but entirely incorrect explanation. Result: compliance violation, regulatory inquiry, six-figure remediation costs.

Where Agentic AI Actually Wins

Internal Operations: The Sweet Spot

- Data reconciliation across systems

- Report generation

- Cross-platform syncing

A tool that correctly handles 85% of data reconciliation tasks and flags uncertain cases for human review is enormously valuable, because the remaining errors are caught internally before they reach a customer or a regulator.
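A minimal sketch of that "handle the clear cases, flag the rest" pattern, assuming two hypothetical systems (`ledger` and `bank`) that map record IDs to amounts. The function name, the sample data, and the one-cent tolerance are illustrative assumptions, not a recommended configuration.

```python
from typing import Dict, List, Tuple


def reconcile(
    ledger: Dict[str, float],
    bank: Dict[str, float],
    tolerance: float = 0.01,
) -> Tuple[List[str], List[str]]:
    """Split record IDs into auto-matched and needs-human-review."""
    matched: List[str] = []
    review: List[str] = []
    for record_id in sorted(set(ledger) | set(bank)):
        a, b = ledger.get(record_id), bank.get(record_id)
        # Auto-match only when both systems have the record and the
        # amounts agree within tolerance. Everything else goes to a person.
        if a is not None and b is not None and abs(a - b) <= tolerance:
            matched.append(record_id)
        else:
            review.append(record_id)
    return matched, review


ledger = {"inv-001": 120.00, "inv-002": 75.50, "inv-003": 310.00}
bank = {"inv-001": 120.00, "inv-002": 75.05, "inv-004": 42.00}

matched, review = reconcile(ledger, bank)
print(matched)  # ['inv-001']
print(review)   # ['inv-002', 'inv-003', 'inv-004']
```

The design choice worth noting is the default: anything that is not an unambiguous match goes to the review queue. The tool never guesses, which is why a partial automation rate is acceptable here.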

Customer-Facing Applications: Proceed with Extreme Caution

Sales conversations, customer service, advisory interactions — error costs are high and potentially unbounded. Current tools can augment these functions, but positioning them as "autonomous agents" is reckless.

The 18-Month Reality Check

Genuinely Improving (12-18 months): Context window expansion, better uncertainty quantification, improved tool use.

Still Mostly Hype (24-36+ months): True autonomous planning, reliable operation without human oversight, generalization to novel domains.

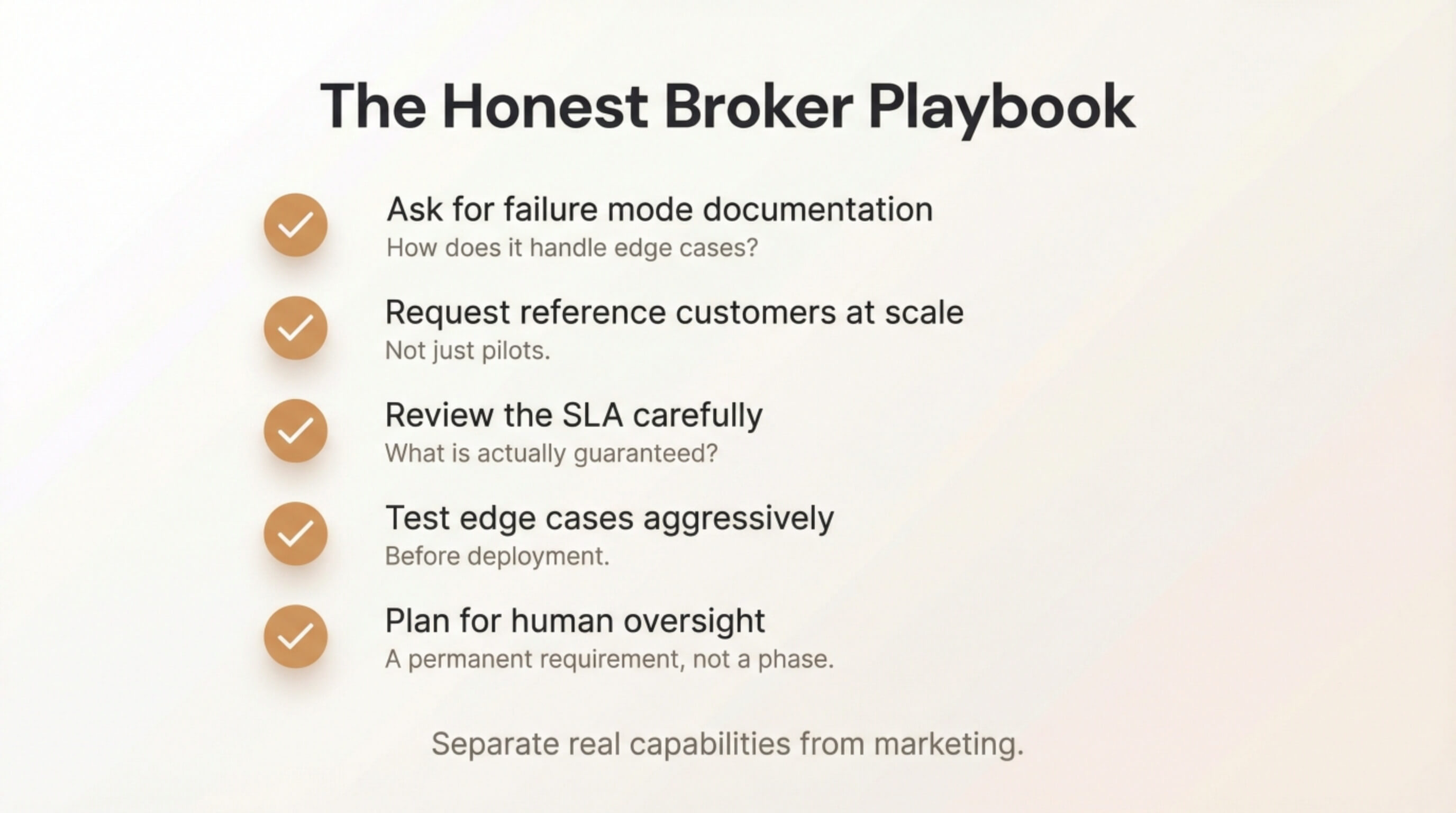

The Honest Broker Playbook

- Ask for failure mode documentation

- Request reference customers at scale

- Review the SLA carefully

- Test edge cases aggressively

- Plan for human oversight

The Bottom Line

Agentic AI is not vaporware. But the gap between current reality and vendor promises is substantial. The winners will be companies that deploy AI tools with a clear-eyed understanding of their actual capabilities.

The technology will get to the demos eventually. Betting as if it is already there is how you become a cautionary tale.