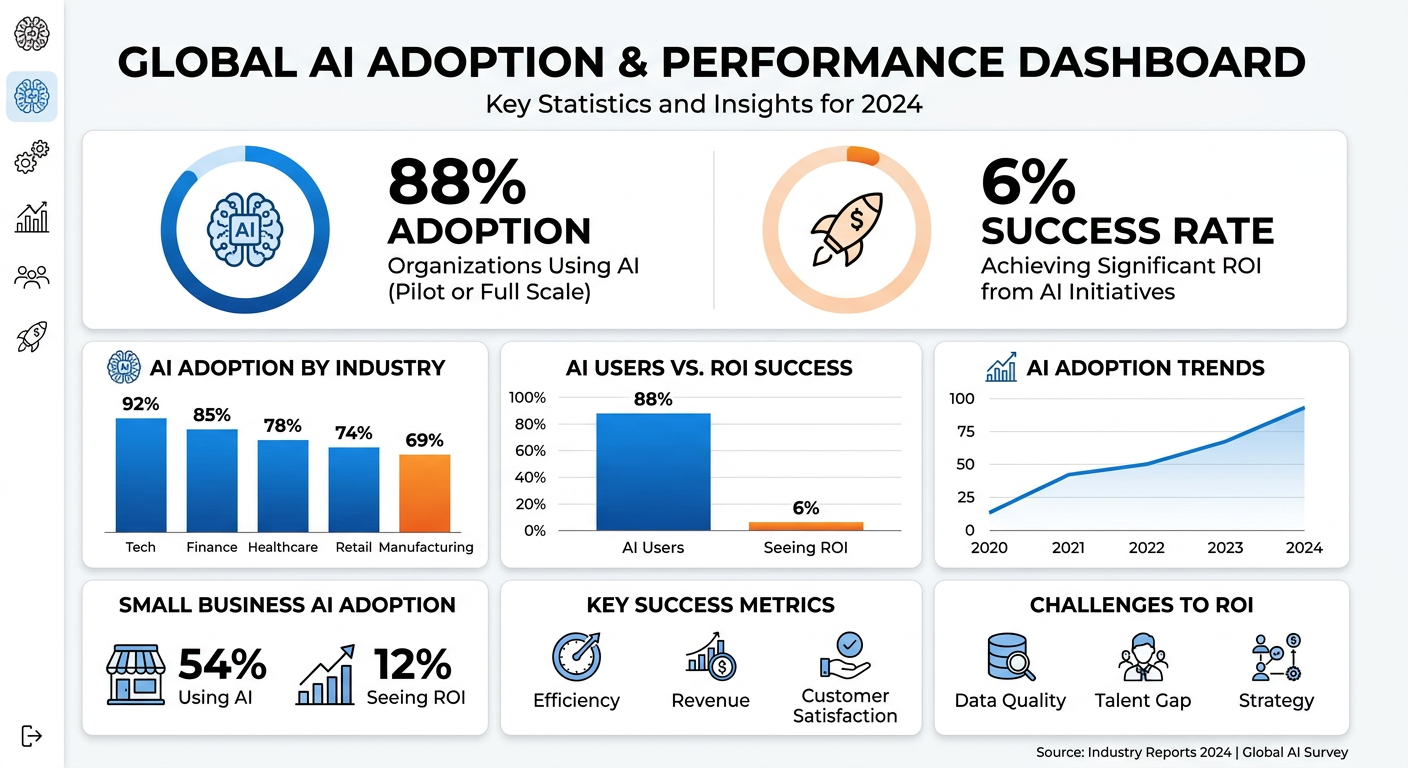

Why 88% of Small Businesses Fail at AI (And How to Join the 6% That Actually See Results)

Here's a statistic that should stop every small business owner in their tracks: 88% of small businesses are now using AI in some capacity, yet a mere 6% report seeing meaningful, measurable results from their investments. The remaining 82%? They're stuck in what industry analysts call "pilot purgatory"—spending money, experimenting with tools, but never achieving the transformational outcomes that justified the hype.

This isn't a technology problem. The AI models available today are more capable than ever. The issue is implementation—and a fundamental misunderstanding of what it takes to move from AI experimentation to AI transformation.

The Brutal Reality of AI Implementation

The numbers paint a sobering picture. According to recent research from MIT's NANDA initiative analyzing $30-40 billion in enterprise AI investments, 70-85% of AI projects fail to meet their objectives or get shelved entirely. Gartner predicts that 30% of generative AI projects will be abandoned after the proof-of-concept phase by the end of 2025. Perhaps most damning: only 23% of enterprises can actually quantify AI's impact with hard data.

But here's what makes these statistics particularly dangerous for small businesses: while large enterprises can absorb failed AI experiments as "learning experiences," small businesses don't have that luxury. A $20,000 AI investment that delivers zero ROI isn't a lesson—it's a threat to survival.

Why Most AI Implementations Fail: The Five Critical Patterns

An analysis of hundreds of AI deployments across small and medium businesses reveals five distinct failure patterns. Understanding these isn't academic; it's the difference between joining the 6% who succeed and the 82% who don't.

1. The Pilot Trap: Experimentation Without Commitment

The most common failure mode is treating AI as a science experiment rather than a business transformation. Companies launch pilots with vague objectives like "explore AI capabilities" or "test ChatGPT for content." Three months later, they have an impressive demo but no measurable business impact.

MIT's research found that 95% of enterprise AI pilots deliver zero measurable ROI. The reason? Pilots are designed as technology demonstrations, not production system prototypes. A pilot that achieves 90% accuracy on test data might drop to 60% when exposed to real-world data variability.

The Fix: Design every pilot as a production rehearsal from day one. Establish production readiness criteria before the pilot starts—not after. Define specific, measurable business outcomes: "Reduce customer service response time by 40%" or "Cut content production costs by $3,000/month." If you can't measure it, you can't manage it.

2. The Tool-First Mindset: Technology Before Strategy

Small businesses often fall into the trap of buying AI tools before understanding their actual needs. They see competitors using ChatGPT, hear about a new AI CRM, or get pitched by a vendor promising miracles—and they buy before strategizing.

Research analyzing 200 B2B AI deployments found that 19% of failures stem directly from tool-first thinking—attempting to automate tasks requiring deep expertise that AI cannot replicate. A real failure case: a startup tried to use AI to write entire sales proposals from scratch. The result? Generic proposals that clients rejected, delivering -18% ROI. The corrected approach—AI drafts outlines, humans add expertise, AI polishes language—delivered +240% ROI.
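
For readers who want to check ROI figures like these against their own numbers, here is a minimal sketch of the usual calculation: net gain divided by cost. The dollar amounts are hypothetical, chosen only to reproduce the two percentages; the research cited above reports the percentages, not the underlying figures.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a percentage: (benefit - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost * 100


# Hypothetical dollar amounts for illustration only.
ai_only = roi_percent(total_benefit=8_200, total_cost=10_000)
hybrid = roi_percent(total_benefit=34_000, total_cost=10_000)

print(f"AI writes everything: {ai_only:+.0f}% ROI")
print(f"AI drafts, humans add expertise: {hybrid:+.0f}% ROI")
```

A negative result means the project returned less than it cost, which is why it pays to estimate both sides before buying a tool.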

The Fix: Start with business problems, not AI solutions. Map your highest-friction processes. Identify where human judgment adds value versus where automation can help. Then—and only then—select tools that match your specific workflow requirements.

3. The Data Readiness Gap: Garbage In, Garbage Out

Here's a truth that AI vendors rarely emphasize: 85% of IT professionals confirm that AI outputs are only as good as data inputs. Yet 66% of SMBs lack proper data management infrastructure. They're trying to build AI on foundations of sand.

The data readiness gap manifests in multiple ways: inconsistent data formats across systems, incomplete historical records, siloed information that AI can't access, and poor data hygiene that produces unreliable outputs.

The Fix: Before any AI implementation, conduct a comprehensive data audit. Clean, standardize, and centralize your critical business data. This isn't glamorous work, but it's the difference between AI that hallucinates and AI that delivers.

4. The Skills and Change Management Deficit

AI implementation is only 10% algorithms, 20% data and technology, and 70% people, processes, and cultural transformation—what BCG calls the "10-20-70 principle." Yet most small businesses spend 90% of their AI budget on software and virtually nothing on training.

The research is clear: 46% of business leaders cite skills and training gaps as the primary barrier to AI adoption. Around 42% of companies face internal resistance to change. When employees fear AI will replace them—or simply don't understand how to use new tools effectively—adoption stalls at single-digit percentages.

The Fix: Successful AI adopters spend 70% of their budget on people and process (training, workflow reengineering, change management) and only 30% on technology. Invest in comprehensive training programs. Frame AI as augmentation, not replacement. Create internal champions who can guide their colleagues through the transition.

5. The Wrong Use Case Selection

Not every business problem benefits from AI. Research analyzing failed AI projects found that 38% of failures stem from wrong use case selection—attempting to automate tasks requiring deep expertise, nuanced judgment, or complex contextual understanding that current AI cannot reliably provide.

Meanwhile, high-ROI use cases often get ignored because they're less visible. MIT research found that over half of AI budgets go to sales and marketing applications, but back-office automation delivers the highest returns with 6-18 month payback periods.

The Fix: Focus initial AI investments on high-volume, repetitive tasks with clear success metrics. Customer service automation, content drafting, data entry, and scheduling are proven winners. Save the complex, judgment-intensive applications for later stages when you've built organizational AI maturity.

The Execution Gap: Why Knowing Isn't Doing

Here's the uncomfortable truth: most small business owners already know everything we've covered. They understand that pilots need clear objectives. They know data quality matters. They've heard about change management. Yet they still fail.

The gap isn't knowledge—it's execution. Specifically, three execution failures doom most AI initiatives:

Failure to Commit Beyond the Pilot

Companies allocate budget for pilot programs but not for production deployment. They declare victory when the demo works, then discover that production requires 99.9% uptime, security frameworks, integration with existing systems, user training programs, support infrastructure, and continuous monitoring—none of which were budgeted.

Research shows that only 16% of AI initiatives achieve scale beyond the pilot stage. The rest die in "pilot purgatory"—working demos that never become working businesses.

Failure to Measure What Matters

Only 23% of enterprises can quantify AI's business impact with hard data. The rest rely on qualitative feedback, anecdotal evidence, or gut feeling. When budget reviews come, they can't defend their AI investments against CFO scrutiny.

Critical success metrics to track from Day 1:

- Task completion time (baseline vs. AI-assisted)

- Error rate or rework frequency

- User adoption rate (% of eligible users actively engaging)

- Direct cost or revenue impact where quantifiable

- Escalation rate (human override frequency)
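
Most of these metrics can be computed from a simple task log. The sketch below assumes a hypothetical `TaskRecord` schema and made-up sample data; it is one way to structure the comparison, not a prescribed format. Direct cost or revenue impact is left out because it depends on each business's own accounting.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    """One completed task in the measurement window (hypothetical schema)."""
    minutes: float        # time taken to complete the task
    needed_rework: bool   # output had to be corrected or redone
    human_override: bool  # a person escalated or overrode the AI output


def pct(part: float, whole: float) -> float:
    """Express part/whole as a percentage."""
    return part / whole * 100


def summarize(baseline, assisted, active_users, eligible_users):
    """Compare AI-assisted tasks against the pre-AI baseline."""
    if not baseline or not assisted or eligible_users <= 0:
        raise ValueError("need baseline tasks, assisted tasks, and eligible users")
    base_time = sum(t.minutes for t in baseline) / len(baseline)
    ai_time = sum(t.minutes for t in assisted) / len(assisted)
    if base_time <= 0:
        raise ValueError("baseline task time must be positive")
    return {
        "time_saved_pct": pct(base_time - ai_time, base_time),
        "baseline_rework_pct": pct(sum(t.needed_rework for t in baseline), len(baseline)),
        "assisted_rework_pct": pct(sum(t.needed_rework for t in assisted), len(assisted)),
        "adoption_pct": pct(active_users, eligible_users),
        "escalation_pct": pct(sum(t.human_override for t in assisted), len(assisted)),
    }


# Made-up sample data for illustration only.
baseline = [TaskRecord(10, False, False), TaskRecord(20, True, False)]
assisted = [TaskRecord(6, False, False), TaskRecord(12, False, True)]

report = summarize(baseline, assisted, active_users=7, eligible_users=10)
for metric, value in report.items():
    print(f"{metric}: {value:.1f}")
```

The important design choice is capturing the baseline before the AI tool goes live; without it, none of the comparisons are possible.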

Failure to Design for Learning

The organizations achieving 10.3x returns on AI investments share one characteristic: their systems learn and improve over time. They capture feedback, adapt to context, and continuously refine based on real-world performance.

Most small businesses deploy static AI tools that don't improve. Six months later, they're using the same tool the same way, wondering why results haven't improved. Meanwhile, competitors with learning-capable systems have left them behind.

The 90-Day Roadmap: From Failure to Success

Small businesses don't need enterprise-scale transformation programs. They need focused, disciplined execution. Here's a proven 90-day framework that has helped hundreds of SMBs move from the 88% to the 6%:

Days 1-30: Discovery and Foundation

Weeks 1-2: Data Audit and Quality Assessment

- Inventory all business data sources

- Assess data quality, completeness, and accessibility

- Identify data silos and integration requirements

- Clean and standardize priority datasets

Weeks 3-4: Use Case Identification

- Map current workflows and identify friction points

- Prioritize use cases by: volume of work, clarity of success metrics, feasibility of automation

- Select ONE pilot use case (not five)

- Define specific, measurable success criteria
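
One simple way to apply the three prioritization criteria is to rate each candidate from 1 to 5 on each and pick the highest total. The sketch below assumes equal weights and uses hypothetical ratings; the `UseCase` type and the scores are illustrative, not part of any published framework.

```python
from typing import NamedTuple


class UseCase(NamedTuple):
    name: str
    volume: int          # 1 (rare) to 5 (daily, high volume)
    metric_clarity: int  # 1 (vague) to 5 (easily measured)
    feasibility: int     # 1 (hard to automate) to 5 (straightforward)


def pick_pilot(candidates: list[UseCase]) -> UseCase:
    """Return the single highest-scoring candidate (equal weights assumed)."""
    if not candidates:
        raise ValueError("no candidates to rank")
    return max(candidates, key=lambda c: c.volume + c.metric_clarity + c.feasibility)


# Hypothetical ratings for illustration only.
candidates = [
    UseCase("Invoice data entry", volume=5, metric_clarity=5, feasibility=4),
    UseCase("Sales proposal writing", volume=2, metric_clarity=2, feasibility=2),
    UseCase("Appointment scheduling", volume=4, metric_clarity=4, feasibility=4),
]

print("Pilot use case:", pick_pilot(candidates).name)
```

Returning exactly one winner is deliberate: it enforces the "ONE pilot, not five" rule.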

Days 31-60: Planning and Preparation

Weeks 5-6: Vendor Selection and Architecture

- Evaluate AI solutions against specific use case requirements

- Prioritize vendors with proven SMB implementations

- Assess integration complexity with existing systems

- Verify data security and compliance capabilities

Weeks 7-8: Change Management and Training Design

- Identify internal AI champions

- Develop training program for affected employees

- Communicate AI strategy: augmentation, not replacement

- Create feedback mechanisms for continuous improvement

Days 61-90: Pilot and Validation

Weeks 9-10: Controlled Deployment

- Deploy to limited user group (50-200 users)

- Run parallel with existing workflows for comparison

- Instrument everything: usage, time savings, error rates, satisfaction

Weeks 11-12: Performance Analysis and Go/No-Go Decision

- Measure against success criteria defined in Week 4

- Collect qualitative feedback through structured interviews

- Make data-driven decision: scale, iterate, or abandon

- Document lessons learned for future implementations

Pilot Success Thresholds Before Scaling:

- 70%+ active adoption rate

- Measurable efficiency improvement of 15%+

- No unresolved critical issues

- Documented production deployment plan
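
These four thresholds translate directly into a go/no-go check. A minimal sketch, assuming a hypothetical `PilotResult` record filled in at the end of the pilot:

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """End-of-pilot measurements (hypothetical structure)."""
    adoption_pct: float         # share of eligible users actively engaging
    efficiency_gain_pct: float  # measured improvement versus baseline
    open_critical_issues: int   # critical issues still unresolved
    has_deployment_plan: bool   # production plan is written down


def failed_thresholds(result: PilotResult) -> list[str]:
    """Return the thresholds the pilot misses; an empty list means ready to scale."""
    failures = []
    if result.adoption_pct < 70:
        failures.append("active adoption below 70%")
    if result.efficiency_gain_pct < 15:
        failures.append("efficiency improvement below 15%")
    if result.open_critical_issues > 0:
        failures.append("unresolved critical issues")
    if not result.has_deployment_plan:
        failures.append("no documented production deployment plan")
    return failures


# Made-up pilot numbers for illustration only.
pilot = PilotResult(adoption_pct=74, efficiency_gain_pct=12,
                    open_critical_issues=0, has_deployment_plan=True)
gaps = failed_thresholds(pilot)
print("Scale" if not gaps else f"Iterate first: {', '.join(gaps)}")
```

Listing the specific gaps, rather than returning a bare yes or no, tells the team what to fix before the next review.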

Real-World Success Patterns: What the 6% Do Differently

Research analyzing AI success stories reveals consistent patterns among the 6% who achieve meaningful ROI:

Pattern 1: Human-in-the-Loop Governance

Companies with human oversight mechanisms experience 4.2x fewer critical incidents. They don't blindly trust AI outputs—they verify, validate, and continuously refine. This isn't inefficiency; it's risk management that enables confident scaling.

Pattern 2: Training Investment Correlation

Organizations investing 25%+ of their AI budget in training achieve 2.1x higher ROI than those focusing exclusively on technology. This includes formal training programs, internal documentation, and ongoing support structures.

Pattern 3: Vendor Solution Advantage

MIT data shows vendor solutions succeed 67% of the time versus 33% for internal builds. Small businesses should buy, not build, unless they have unique proprietary requirements that off-the-shelf solutions cannot address.

Pattern 4: Smaller Initial Scope Wins

Counterintuitively, projects with smaller initial budgets (<$15K) achieved 2.1x higher ROI than large-scale deployments. Start small, prove value, then scale—rather than betting big on unproven approaches.

Pattern 5: Back-Office Focus

Companies focusing initial AI investments on back-office automation (operations, admin, support) see faster returns than those chasing customer-facing applications. The work is less glamorous but the ROI is more reliable.

Actionable Frameworks for Immediate Implementation

Theory without action is useless. Here are three frameworks you can apply immediately:

The AI Opportunity Matrix

Map potential AI use cases across two dimensions: Business Impact (High/Low) and Implementation Complexity (High/Low). Start with the High Impact/Low Complexity quadrant. These are the quick wins that build organizational confidence and fund future initiatives.

| | Low Complexity | High Complexity |
|---|---|---|
| High Impact | START HERE: Content drafting, email automation, meeting transcription | SECOND PHASE: Customer service automation, predictive analytics |
| Low Impact | QUICK TESTS: Image generation, simple chatbots | AVOID: Complex custom builds, unproven applications |
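
The matrix can also be expressed as a small lookup, which is handy when sorting a longer list of ideas in a spreadsheet export or script. This is a sketch; the `quadrant` helper is hypothetical, and the impact and complexity judgments still have to come from you.

```python
# Quadrant labels keyed by (high impact?, high complexity?).
QUADRANTS = {
    (True, False): "START HERE",
    (True, True): "SECOND PHASE",
    (False, False): "QUICK TESTS",
    (False, True): "AVOID",
}


def quadrant(high_impact: bool, high_complexity: bool) -> str:
    """Map an impact/complexity judgment to its matrix quadrant."""
    return QUADRANTS[(high_impact, high_complexity)]


# Example use cases taken from the matrix.
use_cases = [
    ("Content drafting", True, False),
    ("Predictive analytics", True, True),
    ("Simple chatbots", False, False),
    ("Complex custom builds", False, True),
]

for name, impact, complexity in use_cases:
    print(f"{name}: {quadrant(impact, complexity)}")
```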

The 70-20-10 Budget Rule

Allocate AI investment across three categories:

- 70% People & Process: Training, change management, workflow redesign, ongoing support

- 20% Technology: Software licenses, infrastructure, integration

- 10% Contingency: Unexpected requirements, additional training, scope adjustments
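
Applied to a concrete budget, the split works out as follows. A minimal sketch; the function name is hypothetical, and the $20,000 total is just the example figure used earlier in this article.

```python
def allocate_budget(total: float) -> dict[str, float]:
    """Split an AI budget using the 70-20-10 rule."""
    if total < 0:
        raise ValueError("total must be non-negative")
    shares = {"people_and_process": 70, "technology": 20, "contingency": 10}
    return {category: total * percent / 100 for category, percent in shares.items()}


for category, amount in allocate_budget(20_000).items():
    print(f"{category}: ${amount:,.0f}")
```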

The Monthly Health Check

Every month, ask these five questions:

- Are adoption rates increasing or declining?

- What percentage of outputs require human correction?

- Are we measuring direct business impact?

- Have we captured and incorporated user feedback?

- Do we have a clear path to scale or a decision to pivot?

The Cost of Waiting vs. The Cost of Failing

There's a final consideration small business owners must confront. The statistics show that 96% of small business owners plan to adopt AI in the coming years. The gap between AI adopters and non-adopters is widening rapidly. Growing SMBs are 1.8x more likely to invest in AI than declining ones.

But the cost of failed implementation is equally real. 42% of companies have abandoned AI initiatives. 51% of organizations using AI have experienced negative consequences from poor implementation. The difference between these groups isn't luck—it's approach.

The businesses thriving with AI haven't found magical technology. They've executed systematically: clean data foundations, clear use case selection, disciplined change management, and continuous measurement. They treat AI as business transformation, not technology procurement.

That's the path from the 88% to the 6%. It's not about having better AI. It's about being better at implementing AI.

Work With Versalence

We help small businesses navigate the transition from public AI to private, sovereign AI systems:

- AI Infrastructure Assessment: Evaluate systems and identify high-ROI opportunities

- Custom Deployment AI Services: Enterprise-grade platform development and deployment

- RAG Implementation: Vector and graph databases that improve your AI's ability to provide precise and accurate results

📧 versalence.ai/contact.html | sales@versalence.ai