Graph RAG Implementation: A Deep Dive for Thursday

Introduction

Imagine a large pharmaceutical company, PharmaCorp, grappling with a massive internal knowledge base: decades of research papers, clinical trial data, regulatory filings, and internal memos. Its scientists spend an inordinate amount of time searching for relevant information, often reinventing the wheel or missing crucial connections between seemingly unrelated findings. Keyword search is insufficient, and even advanced semantic search struggles to capture the relationships and dependencies within the data. PharmaCorp needs a solution that not only retrieves relevant documents but also understands and uses the structure connecting them. In 2026, Graph RAG (Retrieval-Augmented Generation over a knowledge graph) is no longer an experimental technique but a critical component of enterprise knowledge management, and PharmaCorp is exploring how to implement it effectively. This post covers what actually works in Graph RAG in 2026, focusing on practical implementation and the specific challenges faced by organizations like PharmaCorp.

The Current Landscape - What's happening in 2026

By 2026, Graph RAG has matured significantly. The initial hype has subsided, and practical implementations are driving the field. Several key trends are shaping the landscape:

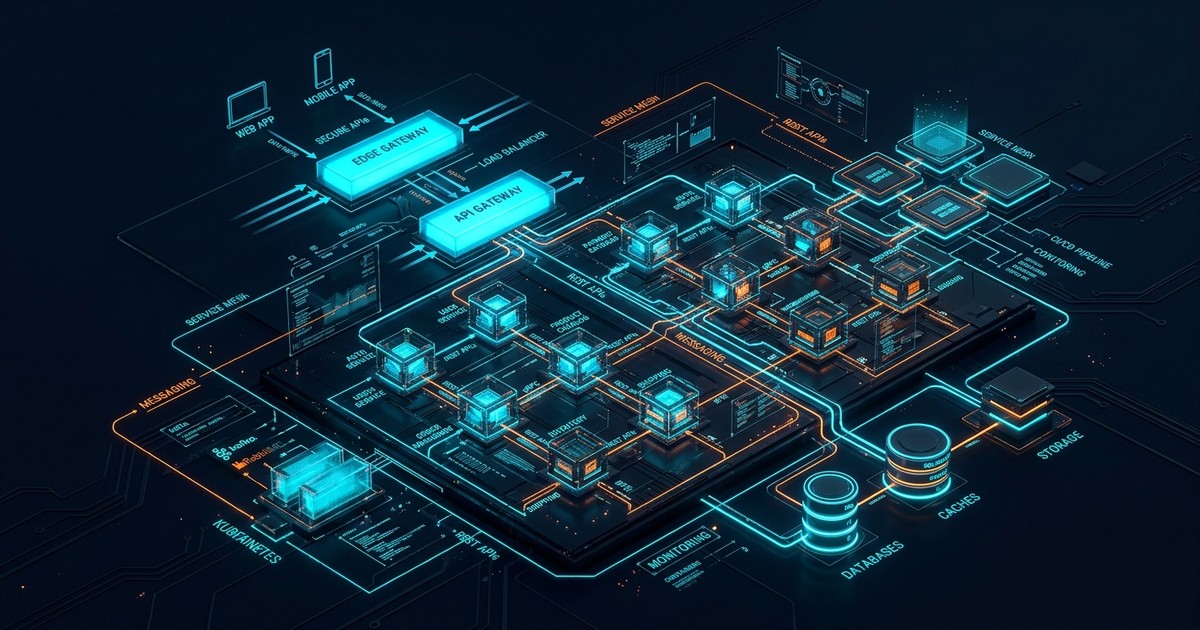

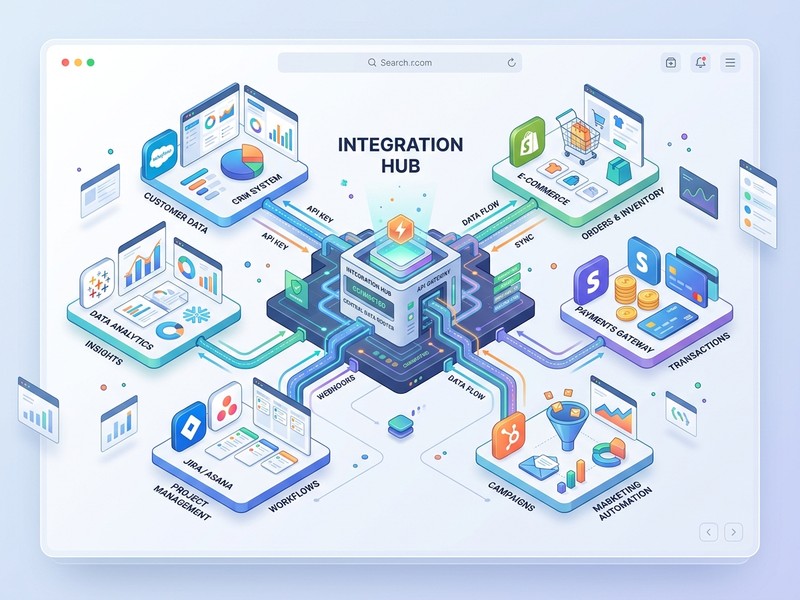

- Hybrid Architectures: Pure graph-based retrieval is rare. Most successful implementations combine graph databases (Neo4j, TigerGraph, JanusGraph) with vector databases (Pinecone, Weaviate, Qdrant) and traditional search engines (Elasticsearch, Solr). The graph database provides the structural context, vector databases handle semantic similarity, and search engines handle exact keyword and phrase matching.

- Advanced Embedding Techniques: Simple document embeddings are no longer sufficient. Techniques like graph neural network (GNN) embeddings and knowledge graph embeddings (KGEs) are used to capture the relationships between entities and concepts within the graph. Fine-tuning these embeddings on domain-specific knowledge is crucial.

- Contextualized LLM Integration: Large Language Models (LLMs) are not just used for generation; they are integrated into the retrieval process. LLMs are used for query understanding, node ranking, and relationship extraction. Techniques like chain-of-thought reasoning are used to guide the LLM to explore the graph effectively.

- Automated Graph Construction: Manual graph construction is time-consuming and error-prone. Automated methods based on natural language processing (NLP) and information extraction are becoming increasingly prevalent. However, human-in-the-loop validation remains essential for ensuring accuracy.

- Explainability and Auditability: As Graph RAG systems become more complex, explainability and auditability are paramount. Users need to understand why a particular answer was generated and be able to trace the reasoning process back to the original data sources.
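A hybrid architecture needs some way to merge the ranked results coming back from the graph, vector, and keyword retrievers. One widely used option is reciprocal rank fusion (RRF), sketched below. This is an illustrative choice rather than something the trends above prescribe, and the document IDs and the three result lists are invented for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs into one ranked list.

    Each item scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Hypothetical result lists from the three retrievers (assumed data).
graph_hits = ["doc_trial_12", "doc_memo_7", "doc_paper_3"]
vector_hits = ["doc_paper_3", "doc_trial_12", "doc_filing_9"]
keyword_hits = ["doc_trial_12", "doc_filing_9"]

fused = reciprocal_rank_fusion([graph_hits, vector_hits, keyword_hits])
print(fused)
```

RRF only looks at rank positions, so it sidesteps the problem of comparing raw scores from systems that use different scales. A weighted variant is possible if one retriever should count for more.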

Deep Dive: Core Concepts - Frameworks and analysis

The core of Graph RAG lies in combining graph-based knowledge representation with LLM-powered generation. Let's break down the key components:

- Knowledge Graph Construction: This involves identifying entities (e.g., diseases, drugs, genes) and relationships (e.g., "treats", "interacts with", "is a subtype of") from unstructured data sources. NLP techniques such as named entity recognition (NER), relation extraction (RE), and coreference resolution are used to automate this process. The resulting graph is stored in a graph database.

- Graph Traversal and Retrieval: When a user poses a question, the system first identifies the relevant entities in the query. Then, it traverses the graph to find related nodes and edges. The traversal can use algorithms such as shortest path and breadth-first search, and a ranking algorithm such as PageRank can prioritize which of the reached nodes to keep. The retrieved subgraph represents the context for the LLM.

- Context Augmentation: The retrieved subgraph is then augmented with additional information, such as entity descriptions, attribute values, and relevant documents. This augmented context is then fed into the LLM.

- LLM Generation: The LLM uses the augmented context to generate a coherent and informative answer to the user's question. The LLM can also be used to generate explanations for its reasoning process.
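The traversal and retrieval step can be sketched with a breadth-first search that collects every edge within a fixed number of hops of the entities found in the query. The sketch below keeps the graph in memory as plain triples. The entity names, relation names, and helper functions are all invented for illustration, and a production system would run the equivalent query inside the graph database.

```python
from collections import deque

# Toy knowledge graph as (head, relation, tail) triples. All names are invented.
TRIPLES = [
    ("DrugA", "treats", "DiseaseX"),
    ("DrugA", "interacts_with", "DrugB"),
    ("DrugB", "treats", "DiseaseY"),
    ("DiseaseX", "is_subtype_of", "DiseaseZ"),
    ("GeneG", "associated_with", "DiseaseY"),
]


def build_adjacency(triples):
    """Index triples by both endpoints so traversal ignores edge direction."""
    adjacency = {}
    for triple in triples:
        head, _, tail = triple
        adjacency.setdefault(head, []).append(triple)
        adjacency.setdefault(tail, []).append(triple)
    return adjacency


def k_hop_subgraph(adjacency, seeds, max_hops):
    """Return the triples reachable within max_hops of any seed entity (BFS)."""
    visited = set(seeds)
    queue = deque((seed, 0) for seed in seeds)
    collected = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand nodes sitting at the hop limit
        for triple in adjacency.get(node, []):
            if triple not in collected:
                collected.append(triple)
            head, _, tail = triple
            neighbor = tail if head == node else head
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, depth + 1))
    return collected


def to_context(triples):
    """Serialize triples as one plain-text fact per line for the LLM prompt."""
    return "\n".join(f"{h} {r.replace('_', ' ')} {t}" for h, r, t in triples)


adjacency = build_adjacency(TRIPLES)
subgraph = k_hop_subgraph(adjacency, ["DrugA"], max_hops=1)
print(to_context(subgraph))
```

The hop limit is the main tuning knob here: a larger value pulls in more context but also more noise, which is why a ranking step is often applied to the collected edges before they reach the LLM.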

Frameworks: Several frameworks facilitate Graph RAG implementation:

- LangChain: A popular framework for building LLM-powered applications. It provides modules for graph data loading, graph traversal, and LLM integration.

- LlamaIndex: Another framework focused on data indexing and retrieval. It supports various data sources, including graph databases.

- Haystack: A framework for building search pipelines. It can be used to integrate graph databases into a search workflow.

Analysis: The performance of a Graph RAG system depends on several factors:

- Graph Quality: The accuracy and completeness of the knowledge graph are crucial. Errors in the graph can lead to incorrect answers.

- Traversal Strategy: The choice of traversal algorithm can significantly impact the relevance of the retrieved context.

- LLM Capabilities: The ability of the LLM to understand the context and generate coherent answers is essential.

- Embedding Quality: High-quality embeddings are needed for accurately retrieving similar nodes and edges.

Comparison and Trade-offs - Tables with pros/cons

Here's a comparison of different graph database options:

| Feature | Neo4j | TigerGraph | JanusGraph |
|---|---|---|---|
| Data Model | Property Graph | Property Graph | Property Graph |
| Scalability | Scales well, mature | Massively parallel, excellent for scale | Designed for distributed environments |
| Query Language | Cypher | GSQL | Gremlin |
| Pros | Mature ecosystem, easy to use | High performance, complex analytics | Open source, highly customizable |
| Cons | Commercial license required for scale | Steeper learning curve | Can be complex to set up and manage |

And a comparison of different embedding techniques:

| Technique | Description | Pros | Cons | Use Case |
|---|---|---|---|---|
| Node2Vec | Learns node embeddings from biased random walks. | Simple, scalable, captures node proximity. | Doesn't capture semantic meaning of node labels or properties. | General-purpose graph embedding. |
| Graph Convolutional Networks (GCNs) | Aggregates information from neighboring nodes using convolutional filters. | Captures node features and graph structure. | Typically needs labeled data, or a self-supervised objective such as link prediction, for training. | Node classification, link prediction. |
| Knowledge Graph Embeddings (KGEs) | Learns embeddings for entities and relations in a knowledge graph. | Captures semantic relationships between entities. | Requires a well-defined knowledge graph. | Relation prediction, knowledge graph completion. |
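To make the KGE row concrete, here is a minimal sketch of how one well-known KGE model, TransE, scores a candidate triple: a relation is treated as a translation vector, so a plausible triple has head + relation close to tail. The 2-dimensional vectors below are hand-picked for illustration. Real embeddings are learned from the graph, and the entity names are invented.

```python
import math


def transe_score(head, relation, tail):
    """TransE plausibility: negative L2 distance between head + relation and tail.

    Scores closer to zero mean the triple is more plausible under the model.
    """
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))


# Hand-picked toy vectors (assumed data, not learned embeddings).
entity_vectors = {
    "DrugA": [0.0, 1.0],
    "DiseaseX": [1.0, 1.0],
    "DiseaseY": [-1.0, 0.0],
}
relation_vectors = {"treats": [1.0, 0.0]}


def rank_tails(head, relation, candidates):
    """Order candidate tail entities from most to least plausible."""
    scored = [
        (transe_score(entity_vectors[head], relation_vectors[relation], entity_vectors[c]), c)
        for c in candidates
    ]
    return [name for _, name in sorted(scored, reverse=True)]


print(rank_tails("DrugA", "treats", ["DiseaseY", "DiseaseX"]))
```

The same scoring function supports knowledge graph completion: candidate edges that score well but are missing from the graph can be proposed for review.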

Implementation Framework - Step-by-step guide

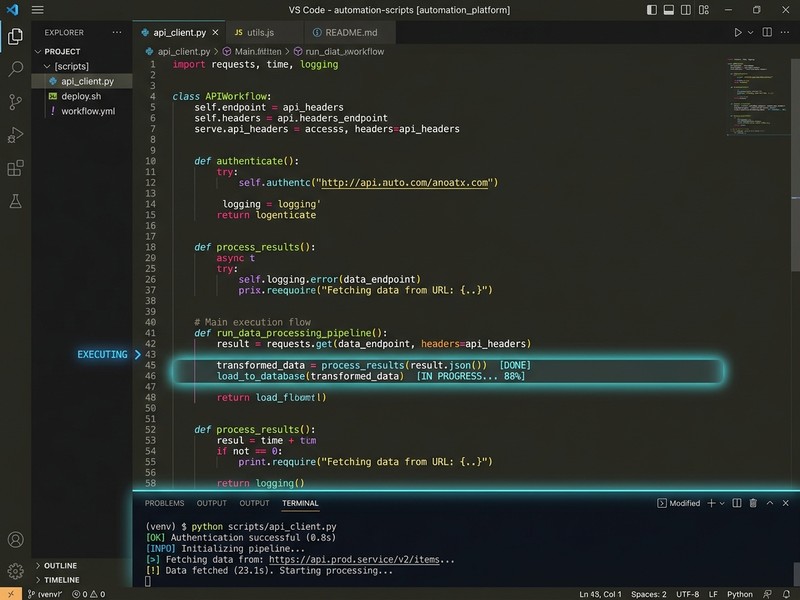

Here's a step-by-step guide to implementing Graph RAG:

- Data Ingestion and Preprocessing: Collect and clean your data. Identify the relevant entities and relationships.

- Graph Schema Definition: Define the schema of your knowledge graph, including the entity types, relationship types, and properties.

- Graph Construction: Use NLP techniques to extract entities and relationships from your data. Populate the graph database with the extracted data.

- Embedding Generation: Generate embeddings for the nodes and edges in your graph. Fine-tune the embeddings on your domain-specific data.

- Query Understanding: Use an LLM to understand the user's query and identify the relevant entities.

- Graph Traversal: Traverse the graph to find related nodes and edges.

- Context Augmentation: Augment the retrieved subgraph with additional information.

- LLM Generation: Use an LLM to generate an answer to the user's question.

- Evaluation and Refinement: Evaluate the performance of your system and refine the graph, embeddings, and LLM parameters.
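The schema definition and graph construction steps fit together: once the schema is explicit, extracted triples can be checked against it before they are written to the graph, with failures routed to a human reviewer. The sketch below assumes a hypothetical biomedical schema and a hand-written entity type table. `RelationType`, `SCHEMA`, `ENTITY_TYPES`, and the sample triples are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RelationType:
    name: str
    head_type: str
    tail_type: str


# Hypothetical schema: which entity types each relation may connect.
SCHEMA = {
    "treats": RelationType("treats", "Drug", "Disease"),
    "interacts_with": RelationType("interacts_with", "Drug", "Drug"),
    "is_subtype_of": RelationType("is_subtype_of", "Disease", "Disease"),
}

# Assumed output of an upstream entity typing step.
ENTITY_TYPES = {"DrugA": "Drug", "DrugB": "Drug", "DiseaseX": "Disease"}


def validate_triple(triple):
    """Return None if the triple fits the schema, else a short reason string."""
    head, relation, tail = triple
    rel = SCHEMA.get(relation)
    if rel is None:
        return f"unknown relation '{relation}'"
    for role, entity, expected in (("head", head, rel.head_type), ("tail", tail, rel.tail_type)):
        actual = ENTITY_TYPES.get(entity)
        if actual is None:
            return f"unknown entity '{entity}'"
        if actual != expected:
            return f"{role} '{entity}' is {actual}, expected {expected}"
    return None


def partition(triples):
    """Split triples into schema-valid ones and a queue for human review."""
    accepted, review_queue = [], []
    for triple in triples:
        reason = validate_triple(triple)
        if reason is None:
            accepted.append(triple)
        else:
            review_queue.append((triple, reason))
    return accepted, review_queue


extracted = [
    ("DrugA", "treats", "DiseaseX"),
    ("DiseaseX", "treats", "DrugA"),  # direction reversed by a faulty extractor
    ("DrugA", "cures", "DiseaseX"),   # relation not in the schema
]
accepted, review_queue = partition(extracted)
for triple, reason in review_queue:
    print(triple, "->", reason)
```

A check like this catches only structural errors. A triple can satisfy the schema and still be factually wrong, so sampling accepted triples for manual review remains worthwhile.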

Specific Implementation Advice:

- Use a hybrid approach: Combine graph databases with vector databases for optimal performance.

- Fine-tune your embeddings: Fine-tune your embeddings on your domain-specific data to improve accuracy.

- Use chain-of-thought reasoning: Guide the LLM to explore the graph effectively.

- Implement explainability: Provide users with explanations for the generated answers.

- Automate graph construction: Use NLP techniques to automate the process of extracting entities and relationships.
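One lightweight way to approach the explainability advice is to keep the source document attached to every edge, number the facts in the prompt, and ask the model to cite those numbers. The sketch below shows only the bookkeeping. No LLM is called, the answer string is a hand-written stand-in for model output, and the `Fact` class, file names, and prompt wording are assumptions made for the example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    head: str
    relation: str
    tail: str
    source: str  # identifier of the document the edge was extracted from


def build_prompt(question, facts):
    """Number each fact so the model can cite it, and keep a citation map."""
    lines = []
    citations = {}
    for index, fact in enumerate(facts, start=1):
        lines.append(f"[{index}] {fact.head} {fact.relation} {fact.tail}")
        citations[index] = fact.source
    prompt = (
        "Answer using only the numbered facts. Cite fact numbers in brackets.\n\n"
        "Facts:\n" + "\n".join(lines) + f"\n\nQuestion: {question}\nAnswer:"
    )
    return prompt, citations


def resolve_citations(answer, citations):
    """Map bracketed fact numbers in an answer back to source documents."""
    cited = [int(number) for number in re.findall(r"\[(\d+)\]", answer)]
    return [citations[number] for number in cited if number in citations]


# Hypothetical retrieved facts with invented source file names.
facts = [
    Fact("DrugA", "treats", "DiseaseX", "trial_report_12.pdf"),
    Fact("DrugA", "interacts with", "DrugB", "safety_memo_7.docx"),
]
prompt, citations = build_prompt("What does DrugA treat?", facts)

# Stand-in for a model response; a real system would send `prompt` to an LLM.
answer = "DrugA treats DiseaseX [1]."
print(resolve_citations(answer, citations))
```

Citation numbers that do not match any supplied fact are dropped by `resolve_citations`, which gives a simple signal that the model may have cited something it was not given.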

Decision Guide - How to choose

Choosing the right components for your Graph RAG system depends on your specific needs and constraints. Here's a decision framework:

| Factor | Options | Considerations |
|---|---|---|
| Graph Database | Neo4j, TigerGraph, JanusGraph | Neo4j: Mature ecosystem, easy to use, but commercial license for scale. TigerGraph: High performance, complex analytics, but steeper learning curve. JanusGraph: Open source, highly customizable, but can be complex to set up. Consider data volume, query complexity, and budget. |
| Embedding Technique | Node2Vec, GCNs, KGEs | Node2Vec: Simple, scalable, but doesn't capture semantic meaning. GCNs: Captures node features and graph structure, but typically needs labeled data or a self-supervised objective for training. KGEs: Captures semantic relationships, but requires a well-defined knowledge graph. Consider the type of data you have and the relationships you want to capture. |
| LLM | GPT-4, Claude, Llama 3 | Consider the LLM's capabilities, cost, and API availability. Experiment with different LLMs to find the one that performs best on your task. Consider using smaller, fine-tuned LLMs for faster inference and lower cost. |
| Graph Construction Method | Manual, Automated (NLP), Hybrid | Manual: Accurate, but time-consuming. Automated: Fast, but may be inaccurate. Hybrid: Automated extraction with human validation, trading some speed for accuracy. Consider the size and complexity of your data and the level of accuracy required. |

Case Study

Consider a financial institution, FinCorp, using Graph RAG to detect fraudulent transactions. They build a knowledge graph representing customers, accounts, transactions, and relationships between them (e.g., "transferred to", "related to"). They use GCNs to embed nodes, capturing patterns of suspicious activity. When a new transaction occurs, the system retrieves the relevant subgraph and uses an LLM to assess the risk of fraud. The LLM considers the customer's transaction history, relationships to other customers, and any known fraudulent patterns in the graph. This allows FinCorp to identify and prevent fraudulent transactions more effectively than traditional rule-based systems. The explainability feature allows fraud investigators to quickly understand why a transaction was flagged as suspicious.

30-Day Action Checklist

Here's a 30-day action checklist to get started with Graph RAG:

Week 1: Planning and Setup

- [ ] Define your use case and identify the data sources.

- [ ] Choose a graph database and LLM.

- [ ] Set up your development environment.

Week 2: Data Ingestion and Graph Construction

- [ ] Ingest your data into the graph database.

- [ ] Implement NLP pipelines for entity and relation extraction.

- [ ] Validate the accuracy of the constructed graph.

Week 3: Embedding Generation and LLM Integration

- [ ] Generate embeddings for the nodes and edges in your graph.

- [ ] Integrate the LLM into your system.

- [ ] Implement query understanding and graph traversal logic.

Week 4: Evaluation and Refinement

- [ ] Evaluate the performance of your system on a set of test queries.

- [ ] Refine the graph, embeddings, and LLM parameters.

- [ ] Implement explainability features.
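For the Week 4 evaluation, a simple starting metric is recall@k on the retrieval step: of the items a domain expert marked as relevant for a test query, what fraction appears in the top k results. The sketch below assumes a small hand-labeled test set and uses a dictionary lookup as a stand-in for the real retriever. The queries, document IDs, and the `fake_retrieve` function are invented.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of relevant items that appear in the top-k retrieved list."""
    if not relevant:
        return 0.0
    top_k = set(retrieved[:k])
    return len(top_k & set(relevant)) / len(relevant)


def mean_recall_at_k(test_set, retrieve, k):
    """Average recall@k over (query, relevant_items) pairs."""
    scores = [recall_at_k(retrieve(query), relevant, k) for query, relevant in test_set]
    return sum(scores) / len(scores)


# Hypothetical labeled test queries (assumed data).
test_set = [
    ("What treats DiseaseX?", ["doc_trial_12", "doc_paper_3"]),
    ("What interacts with DrugA?", ["doc_memo_7"]),
]

# Stand-in retriever: canned results keyed by query text.
canned_results = {
    "What treats DiseaseX?": ["doc_trial_12", "doc_filing_9", "doc_paper_3"],
    "What interacts with DrugA?": ["doc_filing_9", "doc_memo_7"],
}


def fake_retrieve(query):
    return canned_results[query]


print(mean_recall_at_k(test_set, fake_retrieve, k=2))
```

Retrieval recall says nothing about whether the generated answer is correct, so it is best paired with a review of the final answers on the same test queries.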

Bottom Line - Key takeaways

Graph RAG is a powerful technique for leveraging the structure of knowledge graphs to improve the accuracy and relevance of LLM-generated answers. Successful implementations require a hybrid architecture, advanced embedding techniques, contextualized LLM integration, and automated graph construction. Explainability and auditability are paramount. By following the implementation framework and decision guide outlined in this blog post, organizations can effectively implement Graph RAG and unlock the full potential of their knowledge assets.

Work With Versalence

Implementing a robust and scalable Graph RAG system requires deep expertise in graph databases, natural language processing, and large language models. Versalence specializes in building cutting-edge AI-powered solutions that leverage the latest advancements in Graph RAG. Our team of experienced engineers and data scientists can help you design, implement, and deploy a custom Graph RAG solution that meets your specific needs. From data ingestion and graph construction to embedding generation and LLM integration, we provide end-to-end support to ensure your success. Let us help you transform your data into actionable insights.

📧 versalence.ai/contact.html | sales@versalence.ai