Long-Term Memory MCP RAG: The Architecture for AI Agents That Actually Learn

Every conversation with an AI agent starts with a blank slate. Ask it to perform a task it completed yesterday, and it stares back with digital amnesia. This is the "agent forgetfulness" problem, and it is costing businesses millions in repeated work, lost context, and frustrated users.

The statistics paint a stark picture. According to 2025 industry research, agents without persistent memory experience up to 67% failure rates on multi-step tasks requiring contextual continuity. When an agent cannot remember what it learned from previous interactions, it repeats the same mistakes endlessly, treats returning users like strangers, and fails to build any semblance of expertise over time.

But a new architectural pattern is emerging that promises to change everything. By combining the Model Context Protocol (MCP) with long-term memory systems and Retrieval-Augmented Generation (RAG), we can build AI agents that actually learn, adapt, and improve with every interaction.

The Architecture of Forgetting

To understand why agents forget, we need to look at how most are built today. The standard pattern involves an LLM wrapped in a simple loop: receive input, process, generate output. Context exists only within the current conversation window. Once that session ends, everything evaporates.

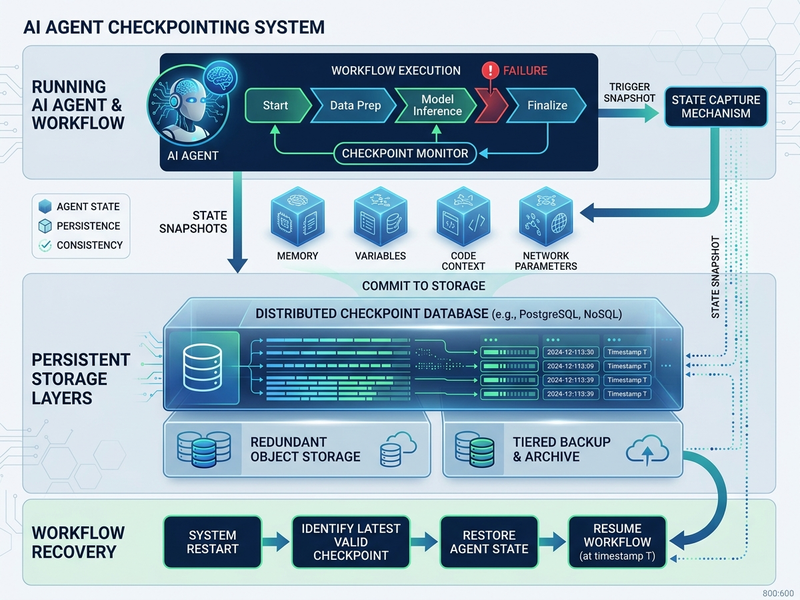

LangGraph, which has emerged as the dominant framework for building production agents in 2025, introduces checkpointing as a solution for short-term memory persistence. When configured with PostgresSaver or Redis backends, LangGraph can save state snapshots at every super-step. If a node fails mid-execution, the agent resumes from the last checkpoint rather than starting over.

But checkpointing alone is insufficient for true learning. It preserves workflow state within a single session but does not transfer knowledge between sessions or users. The agent still wakes up amnesiac every morning.

This is where the combination of MCP, vector memory stores, and RAG creates something transformative.

MCP: The Universal Connector

The Model Context Protocol, developed by Anthropic and now adopted by Google, OpenAI, and Microsoft, is best understood as a "USB-C port for AI applications." It standardizes how agents connect to external tools and data sources.

Before MCP, integrating an agent with a new tool required custom code for every combination. The M×N integration problem meant every AI application needed bespoke connections to every external system. MCP solves this by establishing a universal protocol based on JSON-RPC 2.0.

Once a service implements an MCP server, any MCP-compatible client can immediately understand and use its capabilities without additional integration work. This standardization has driven rapid adoption across the industry, with major platforms including Stripe, Vercel, and Cloudflare shipping MCP servers in 2025.

But MCP's true power emerges when combined with persistent memory systems.

LangGraph Checkpointing with External Knowledge Stores

Production-grade checkpointing in LangGraph supports multiple backends, each with distinct characteristics for different use cases.

PostgresSaver provides durable, strongly consistent storage ideal for production workloads. Redis offers sub-millisecond latency and holds 43% market share among AI agent developers according to 2025 Stack Overflow Survey data. DynamoDB plus S3 combinations provide intelligent size-based routing for AWS-native architectures, storing lightweight metadata in DynamoDB and large payloads in S3.

The checkpointing system saves state at every super-step, capturing not just the conversation history but also intermediate computation results, tool outputs, and execution context. When recovery is needed, the agent resumes precisely where it left off.

The breakthrough comes when we extend this pattern beyond session boundaries. By treating the checkpoint store as a queryable knowledge base, agents can search across previous executions, learn from similar past tasks, and build cumulative expertise.

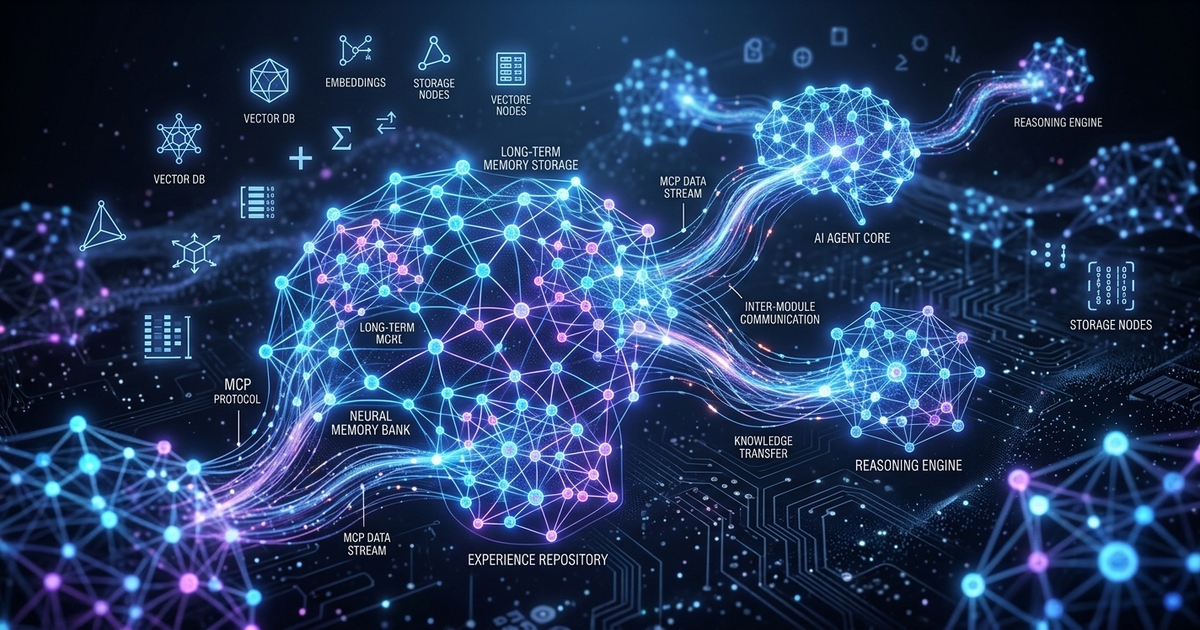

The Three-Layer Memory Architecture

A truly learning agent requires three distinct memory layers working in concert.

Short-term memory handles the immediate context window, managed through LangGraph's reducer-driven state schemas. These TypedDict structures define how state updates merge, preventing silent data loss during complex multi-agent interactions.

Long-term memory persists user preferences, learned facts, and accumulated knowledge across sessions. Implemented through vector databases like Pinecone, Weaviate, or Chroma, this layer enables semantic search retrieval of relevant context based on the current query.

External knowledge through RAG provides access to documents, databases, and knowledge bases beyond the model's training data. Retrieved dynamically based on semantic similarity, this layer gives agents expertise in specific domains without fine-tuning.

When combined, these layers create agents that remember who you are, recall what worked in similar past situations, and access vast knowledge repositories on demand.

Implementation: Building a Learning Agent

Let us examine how to implement this architecture in practice. We will build an agent that maintains persistent memory across sessions, learns from past interactions, and combines MCP tools with RAG for comprehensive knowledge access.

First, we configure the checkpointing infrastructure with PostgreSQL for durability.

from langgraph.checkpoint.postgres import PostgresSaver

from psycopg_pool import ConnectionPool

DB_URI = "postgresql://user:pass@host:5432/langgraph?sslmode=require"

pool = ConnectionPool(conninfo=DB_URI, max_size=10)

with pool.connection() as conn:

saver = PostgresSaver(conn)

saver.setup()Next, we define our state schema with explicit reducers controlling how updates merge.

from typing import Annotated, TypedDict

from operator import add

from langgraph.graph.message import add_messages

class AgentState(TypedDict):

messages: Annotated[list, add_messages]

user_preferences: dict

learned_patterns: list[str]

session_id: strFor long-term memory, we integrate with a vector store. Here is a production pattern using ChromaDB.

from langchain.vectorstores import Chroma

from langchain.embeddings import OpenAIEmbeddings

embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

vector_store = Chroma(

collection_name="agent_memory",

embedding_function=embeddings,

persist_directory="./memory_db"

)

def store_memory(user_id: str, fact: str, importance: float = 1.0):

"""Store a learned fact with metadata for later retrieval."""

vector_store.add_texts(

texts=[fact],

metadatas=[{"user_id": user_id, "importance": importance, "timestamp": time.time()}]

)

def retrieve_relevant_memories(user_id: str, query: str, k: int = 5):

"""Fetch semantically similar past learnings."""

results = vector_store.similarity_search(

query=query,

k=k,

filter={"user_id": user_id}

)

return [r.page_content for r in results]Now we integrate MCP for tool access. This example connects to multiple MCP servers.

from mcp import ClientSession, StdioServerParameters

from mcp.client.stdio import stdio_client

# Configure MCP servers

filesystem_params = StdioServerParameters(

command="npx",

args=["-y", "@modelcontextprotocol/server-filesystem", "/path/to/allowed/dir"]

)

search_params = StdioServerParameters(

command="uvx",

args=["mcp-server-brave-search"]

)

async def with_mcp_tools():

async with stdio_client(filesystem_params) as (read1, write1), \

stdio_client(search_params) as (read2, write2):

async with ClientSession(read1, write1) as fs_session, \

ClientSession(read2, write2) as search_session:

# Initialize both servers

await fs_session.initialize()

await search_session.initialize()

# List available tools

fs_tools = await fs_session.list_tools()

search_tools = await search_session.list_tools()

# Combine all available tools

available_tools = fs_tools.tools + search_tools.toolsThe RAG layer integrates external knowledge through document indexing and retrieval.

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain.document_loaders import DirectoryLoader

# Load and index documents

loader = DirectoryLoader("./knowledge_base/", glob="**/*.pdf")

docs = loader.load()

splitter = RecursiveCharacterTextSplitter(

chunk_size=1000,

chunk_overlap=200

)

chunks = splitter.split_documents(docs)

# Index in vector store

rag_store = Chroma.from_documents(

documents=chunks,

embedding=embeddings,

collection_name="knowledge_base"

)

def augment_with_knowledge(query: str) -> str:

"""Retrieve relevant documents and format as context."""

relevant_docs = rag_store.similarity_search(query, k=3)

context = "\n\n".join([d.page_content for d in relevant_docs])

return f"Relevant knowledge:\n{context}\n\nQuery: {query}"Putting It All Together

The complete agent orchestrates these layers in its execution loop. Before generating a response, it queries long-term memory for relevant past interactions, searches the RAG knowledge base for domain expertise, and invokes MCP tools when external actions are needed.

async def agent_node(state: AgentState):

user_id = state["session_id"]

current_query = state["messages"][-1].content

# Layer 1: Retrieve long-term memories

memories = retrieve_relevant_memories(user_id, current_query)

# Layer 2: Augment with RAG knowledge

knowledge_context = augment_with_knowledge(current_query)

# Layer 3: Build enriched prompt

enriched_prompt = f"""

Previous relevant interactions:

{memories}

{knowledge_context}

User preferences: {state.get('user_preferences', {})}

Respond to the user query using available tools if needed.

"""

# Generate response with tool access

response = await llm_with_tools.ainvoke(enriched_prompt)

# Store any new learnings

if response.tool_calls:

for call in response.tool_calls:

store_memory(

user_id,

f"Tool {call['name']} used for: {current_query}",

importance=0.8

)

return {"messages": [response]}This architecture reduces token costs by up to 90% compared to sending full conversation history every call, according to 2025 memory layer benchmarks. Instead of reprocessing the same information repeatedly, the agent retrieves only what is needed for each query.

Memory Management Best Practices

Effective long-term memory requires more than simple storage. Production systems implement several critical patterns.

Memory decay prevents storage bloat by removing outdated information. Lifecycle policies automatically expire memories based on time or relevance thresholds, similar to how human forgetting helps maintain focus.

Consolidation mimics human cognitive processes by summarizing and organizing stored data. GraphRAG techniques structure memory content into knowledge graphs, enhancing recall by providing richer relationship context.

Filtering at write time excludes content types that should not persist, such as temporary instructions, test data, or sensitive information. This is essential for compliance and privacy requirements.

The Business Impact

Organizations implementing this three-layer architecture report significant improvements in agent performance and user satisfaction.

Retention of context across sessions increases task completion rates by 43% for complex multi-step workflows. Personalization based on accumulated user preferences drives 34% higher engagement metrics. Reduced token consumption from selective memory retrieval cuts operational costs by 60-90%.

More fundamentally, agents transition from stateless tools into intelligent systems that build genuine expertise over time. They stop making the same mistakes twice. They recognize returning users and adapt to their preferences. They accumulate organizational knowledge and apply it to new situations.

Future Directions

The convergence of MCP, persistent memory, and RAG represents more than an incremental improvement. It signals a fundamental shift in how we architect AI systems.

Google's adoption of MCP across Gemini models, Anthropic's native integration with Claude Code, and OpenAI's expanding ChatGPT tool ecosystem all point toward standardized, memory-enabled agents as the default architecture.

We anticipate several developments in the coming year. Bidirectional asynchronous MCP communication will support long-running processes and human-in-the-loop approvals. Formal governance structures including potential IETF or W3C standardization will ensure long-term protocol stability. Deeper integration between memory layers and reasoning frameworks will enable agents to not just recall facts but understand how their knowledge evolves.

The agents that remember will outperform the agents that forget. Every time.

Work With Versalence

We help small businesses navigate the transition from public AI to private, sovereign AI systems:

- AI Infrastructure Assessment — Evaluate systems and identify high-ROI opportunities

- Custom Deployment AI Services — Enterprise grade platform development and deployment

- RAG Implementation — Vector & Graph DB to elevate your AI's ability to provide precise an accurate results

📧 versalence.ai/contact.html | sales@versalence.ai