From Vector Search to Graph RAG: The Memory Architecture Powering Business AI

Your AI is only as smart as the information it can access. Most businesses are building AI on top of broken memory systems — and they do not even know it.

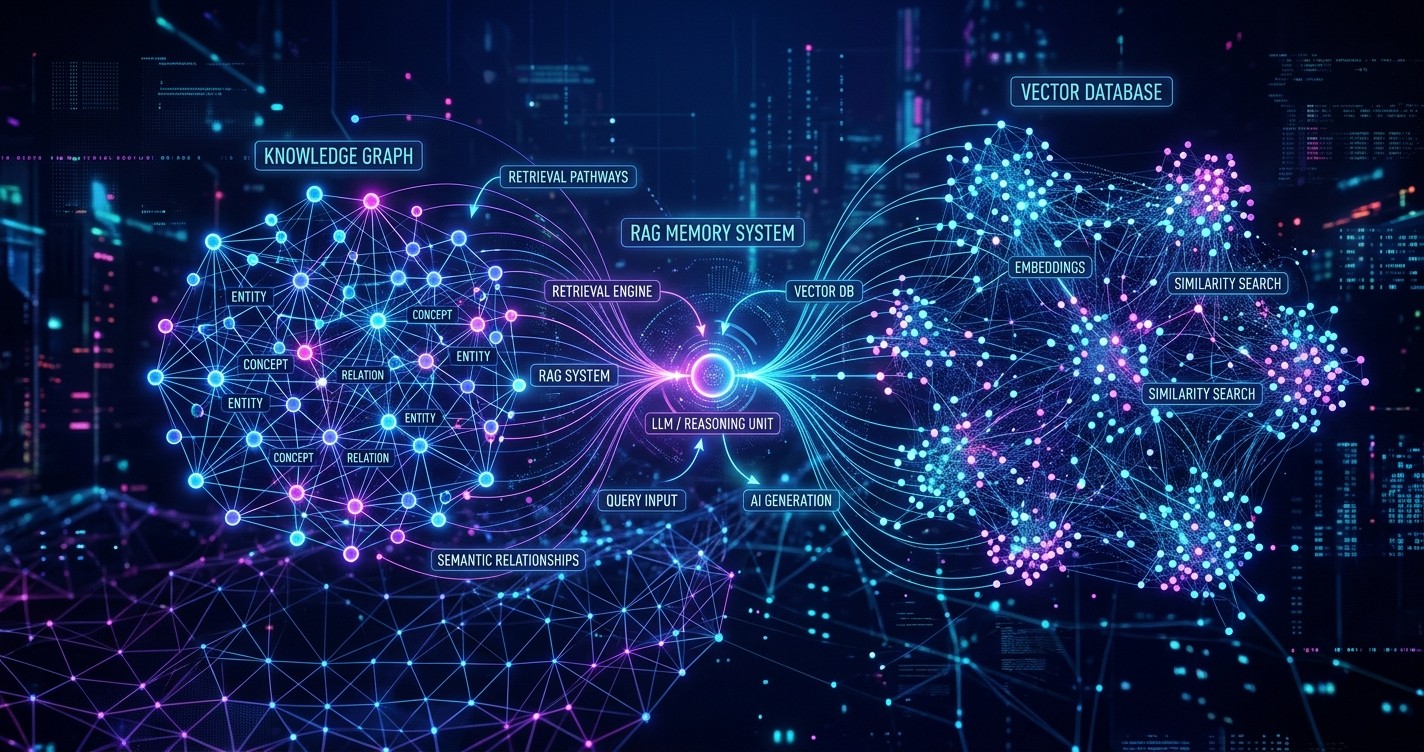

Large Language Models have a fundamental limitation: they only know what they were trained on. They cannot access your company's private documents, your customer history, your proprietary knowledge. This is why Retrieval-Augmented Generation (RAG) has become the standard architecture for business AI.

But here is what most teams miss: not all RAG is created equal. The memory architecture you choose — vector search, knowledge graphs, or the emerging hybrid approaches — determines whether your AI gives accurate, contextual answers or confidently hallucinates.

This article breaks down the three generations of RAG architecture, shows you which one fits your use case, and explains why 2026 is the year Graph RAG moves from experimental to essential.

First-Generation RAG: Vector Search

Vector search was the breakthrough that made enterprise AI practical. Instead of training models on private data — expensive, risky, and slow — you store your documents in a vector database and retrieve relevant chunks at query time.

How it works:

- Documents are split into chunks and converted to embedding vectors (typically 768-1536 dimensions)

- These vectors are stored in a specialized database optimized for similarity search

- When a user asks a question, it is also converted to a vector

- The system retrieves the most similar document chunks

- These chunks are fed to the LLM as context for generating the answer

The tools: Pinecone, Weaviate, Chroma, Qdrant, pgvector, and dozens of others have made vector databases accessible to any development team.

The limitation: Vector search finds similar content, not connected content. It answers "what is like this?" not "what relates to this?"

Consider this query: "What contracts do we have with vendors in Europe that expire in the next six months?"

A vector search might retrieve documents about European vendors, documents about contract expiration, and documents about vendor management. But it has no understanding that these are connected — that "Acme Corp" is a "vendor," that "Acme Corp" is "in Europe," that "Contract #2847" "expires" on "June 15."

The result? Retrieved context that is individually relevant but collectively incoherent. The LLM tries to synthesize an answer from fragmented information and often fails.

Second-Generation RAG: Knowledge Graphs

Knowledge graphs solve the connection problem. Instead of storing documents as vectors, they store facts as structured relationships — entities connected by labeled edges.

How it works:

- Documents are parsed to extract entities (people, companies, products, concepts)

- Relationships between these entities are identified (employs, purchased, located_in, expires_on)

- This structured data is stored in a graph database (Neo4j, Amazon Neptune, ArangoDB)

- Queries traverse the graph to find connected information

- The structured context is fed to the LLM for answer generation

The advantage: Knowledge graphs understand relationships. They can answer complex, multi-hop questions that vector search cannot touch.

That same query about European vendor contracts? A knowledge graph traverses: Vendor → located_in → Europe → has_contract → Contract → expires_on → Date → before → 6 months. Precise. Complete. No hallucination required.

The limitation: Building knowledge graphs is hard. It requires entity extraction, relationship classification, schema design, and continuous maintenance as your data evolves. The upfront cost is significant. Many teams try, struggle with the complexity, and retreat to simpler vector search.

Third-Generation RAG: Graph RAG

Graph RAG combines the best of both approaches. It uses vector search for retrieval and knowledge graphs for reasoning — a hybrid architecture that is rapidly becoming the standard for sophisticated business AI.

How it works:

- Documents are stored in both formats: as vectors for similarity search AND as structured entities in a knowledge graph

- User queries first retrieve relevant documents via vector search

- The entities and relationships from those documents are retrieved from the knowledge graph

- The combined context — unstructured text plus structured relationships — is fed to the LLM

- The LLM generates answers grounded in both semantic similarity and factual connections

Microsoft Research formalized this approach in their 2024 paper on GraphRAG, demonstrating significant improvements on complex reasoning tasks. The key insight: unstructured retrieval finds relevant documents, structured retrieval finds relevant facts, and together they provide the complete context LLMs need for accurate answers.

The practical impact is substantial. Graph RAG systems show:

- 40% reduction in hallucination rates on multi-hop questions

- 3x improvement in answer completeness for relationship-heavy queries

- More interpretable answers because the reasoning path is traceable through the graph

Which Architecture Fits Your Use Case?

Use Vector Search if:

- Your queries are semantic similarity searches ("find documents about X")

- Your data is primarily unstructured text without complex relationships

- You need quick implementation and lower maintenance overhead

- Your questions rarely require connecting information across multiple documents

Use Knowledge Graphs if:

- Your domain has clear, important relationships (org charts, supply chains, legal contracts)

- You answer complex, multi-hop questions regularly

- You have resources for schema design and ongoing graph maintenance

- Explainability and reasoning traceability are critical

Use Graph RAG if:

- You need both semantic search and relationship reasoning

- Your data has rich textual content AND important structured relationships

- You have the engineering capacity for a hybrid architecture

- You are building AI for complex domains (legal, healthcare, finance, supply chain)

Implementation: From Zero to Graph RAG

For teams ready to implement Graph RAG, here is the practical path:

Step 1: Start with vector search

Do not build the graph first. Build a working RAG system with vector search. This gives you immediate value and establishes your baseline. Use this phase to understand what your users actually ask — you will discover the relationship-heavy queries that justify graph investment.

Step 2: Identify graph-worthy queries

Analyze your query logs. Which questions require connecting information across documents? Which answers would benefit from structured reasoning? These are your graph candidates. If fewer than 20% of queries fit this pattern, you might not need Graph RAG yet.

Step 3: Design your ontology

What entity types matter in your domain? Customers, products, contracts, employees, locations? What relationships connect them? purchases, manages, located_in, reports_to? Start small. A focused ontology with 10-15 entity types and 20-30 relationship types is easier to maintain than an exhaustive schema that never gets completed.

Step 4: Build the extraction pipeline

Use LLMs to extract entities and relationships from your documents. Modern models (GPT-4, Claude, Gemini) are surprisingly good at this with proper prompting. Structure your prompts with examples from your domain. Validate outputs against human annotators to measure and improve accuracy.

Step 5: Integrate into retrieval

Modify your RAG pipeline to use both retrieval methods. Retrieve documents via vector search, then expand the context with related entities from the knowledge graph. The combined context gives the LLM both the textual detail and the structured relationships it needs.

Step 6: Measure and iterate

Graph RAG is not set-and-forget. Your data changes. Your ontology needs refinement. Your extraction pipeline needs tuning. Build evaluation datasets for your graph-worthy queries and track improvement over time.

The 2026 Shift: Why Graph RAG Is Going Mainstream

Three factors are driving Graph RAG adoption in 2026:

1. Mature tooling

What required custom engineering two years ago is now available off-the-shelf. Neo4j's LLM Knowledge Graph Builder. LangChain's GraphRAG integration. Microsoft's GraphRAG open-source implementation. The barrier to entry has dropped dramatically.

2. Improved LLM extraction accuracy

Entity and relationship extraction was the bottleneck. Early systems required hand-built rules or expensive human annotation. Today's LLMs extract structured data with 85-95% accuracy on many domains, making automated graph construction practical.

3. Proven ROI in production

Early adopters — pharmaceutical companies analyzing research literature, legal firms tracking case relationships, banks monitoring transaction networks — have demonstrated measurable improvements in answer quality. The business case is now proven, not theoretical.

The Bottom Line

Vector search got enterprise AI off the ground. Knowledge graphs promised deeper reasoning but high complexity limited adoption. Graph RAG bridges the gap — delivering sophisticated reasoning with manageable implementation cost.

If your AI answers feel shallow, if your system hallucinates on complex questions, if your users need connected insights across documents — it is time to move beyond simple vector search. The memory architecture you choose determines what your AI can know. Choose wisely.

Work With Versalence

We help businesses implement RAG systems that actually work — from vector search basics to sophisticated Graph RAG architectures:

- AI Infrastructure Assessment — Evaluate your current setup and identify the right memory architecture for your use case

- Custom RAG Implementation — Production-ready retrieval systems with vector databases, knowledge graphs, or hybrid Graph RAG

- Entity Extraction Pipelines — Automated systems to build and maintain knowledge graphs from your document corpus

📧 versalence.ai/contact.html | sales@versalence.ai