The Anti-Framework: Why Your AI Strategy Shouldn't Start With Technology

Every AI strategy workshop I've sat in starts the same way. Someone pulls up a slide with the logos. OpenAI. Anthropic. Google. The foundation models. Then the vector databases. Pinecone. Weaviate. Chroma. Then the frameworks. LangChain. LlamaIndex. Haystack.

This is backwards. It is exactly backwards. And in my experience it explains why so many AI projects fail.

The Technology-First Trap

The technology-first approach feels logical. You need to know what's possible before you decide what to build, right?

Wrong. You need to know what matters before you decide what to build. The technology is just how you get there. Starting with the "how" before the "what" and "why" is how you end up with solutions looking for problems.

Here's what actually happens in technology-first planning:

The CTO reads about Graph RAG and decides the company needs a knowledge graph. Never mind that 90% of their queries are simple semantic searches that vector search handles fine. The graph becomes a six-month project that delivers a marginal improvement for 10% of use cases, while the other 90% were already working fine on the old system.

Or the CEO sees a demo of a multi-agent system and decides the company needs "agent orchestration." Six months later they have a complex workflow where four agents hand off tasks to each other, except the original task was simple enough for one model to handle, and now they have four failure points instead of one.

Or the product team decides they need "real-time RAG" because they read it was better, except their documents change quarterly, and "real-time" just means they're burning compute rebuilding embeddings every hour for no benefit.
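The arithmetic on that last one is worth spelling out. A minimal sketch, assuming a 365-day year and that "quarterly" means four document updates a year; the function name is mine, for illustration only:

```python
HOURS_PER_YEAR = 24 * 365  # assumes a non-leap year


def wasted_rebuilds(rebuilds_per_year: int, changes_per_year: int) -> int:
    """Rebuilds that ran even though no document had changed since the last one."""
    return max(rebuilds_per_year - changes_per_year, 0)


hourly_rebuilds = HOURS_PER_YEAR  # one embedding rebuild every hour
quarterly_changes = 4             # documents change four times a year

wasted = wasted_rebuilds(hourly_rebuilds, quarterly_changes)
print(wasted)                              # 8756
print(f"{wasted / hourly_rebuilds:.2%}")   # 99.95%
```

Under those assumptions, all but four of the year's rebuilds produce exactly the index you already had.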

What Technology-First Misses

Starting with technology skips three questions that actually matter:

What is the decision this system needs to improve?

Not "what task can we automate" but "what decision do humans currently make poorly, slowly, or inconsistently?" If you can't name the decision, you don't have a use case. You have a technology looking for a home.

What is the cost of being wrong?

Some decisions can tolerate hallucination. Others can't. Customer-facing chat about return policies? Low cost of error, easy to correct. Medical diagnosis? High cost of error, needs human oversight. The technology choice follows from the error tolerance, not the other way around.

What is the cost of being slow?

Some decisions need to happen in milliseconds. Others can take hours. Fraud detection at checkout? Speed matters. Quarterly financial analysis? Speed doesn't matter as much as accuracy. The infrastructure requirements follow from the latency needs, not the other way around.
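The last two questions can be reduced to a rough rule of thumb. This is a sketch, not a policy: the category names, the "low/medium/high" scale, and the one-second and one-hour cutoffs are all assumptions I picked for illustration. Your own thresholds will differ.

```python
from enum import Enum


class Oversight(Enum):
    AUTOMATE = "automate, correct errors after the fact"
    CONFIDENCE_THRESHOLD = "automate only above a confidence threshold"
    HUMAN_REVIEW = "a human reviews every output"


def choose_oversight(cost_of_error: str, easy_to_correct: bool) -> Oversight:
    """Map error tolerance to an oversight level (illustrative rule only)."""
    if cost_of_error not in {"low", "medium", "high"}:
        raise ValueError(f"unknown cost_of_error: {cost_of_error!r}")
    if cost_of_error == "high":
        return Oversight.HUMAN_REVIEW
    if cost_of_error == "low" and easy_to_correct:
        return Oversight.AUTOMATE
    return Oversight.CONFIDENCE_THRESHOLD


def choose_processing(max_wait_seconds: float) -> str:
    """Map the longest acceptable wait to a processing mode (assumed cutoffs)."""
    if max_wait_seconds <= 0:
        raise ValueError("max_wait_seconds must be positive")
    if max_wait_seconds < 1.0:
        return "real-time"
    if max_wait_seconds < 3600.0:
        return "near-real-time"
    return "batch"


# Return-policy chat: low cost of error, easy to correct.
print(choose_oversight("low", easy_to_correct=True).name)    # AUTOMATE
# Medical diagnosis: high cost of error.
print(choose_oversight("high", easy_to_correct=False).name)  # HUMAN_REVIEW
# Fraud detection at checkout versus an overnight analysis job.
print(choose_processing(0.2))       # real-time
print(choose_processing(8 * 3600))  # batch
```

Notice that no vendor name appears anywhere in it. The inputs are facts about the decision, and the outputs are constraints.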

The Alternative: Decision-First Strategy

Here's how to plan an AI project without opening a single vendor website:

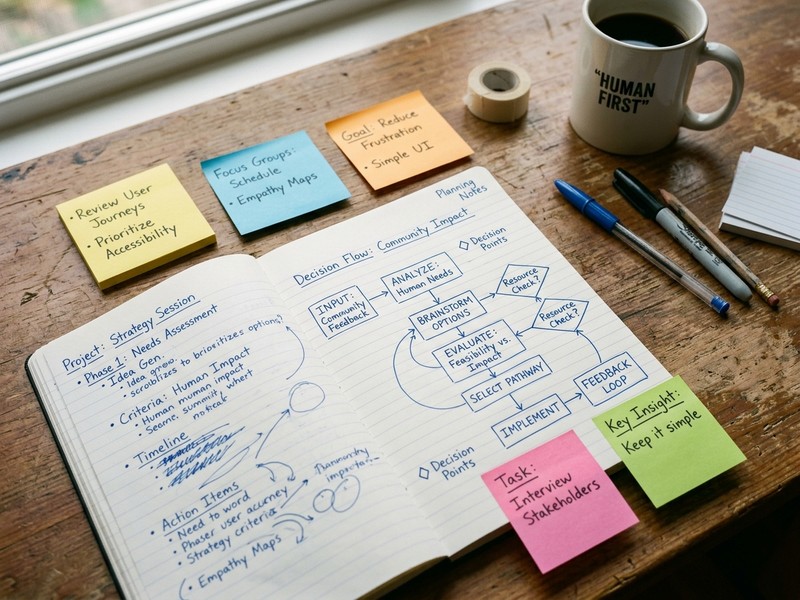

Step 1: Map the decisions

Walk through your organization and identify where humans make judgments. Which ones are slow? Which ones are inconsistent? Which ones require information scattered across systems? These are your candidate problems.

Step 2: Prioritize by leverage

Which decisions, if improved, would have the biggest impact? Not which ones are technically interesting. Not which ones would be cool demos. Which ones actually matter to the business?

Step 3: Define the error envelope

For each high-leverage decision, define how wrong the system can be and still be useful. This tells you whether you need a human in the loop, a confidence threshold, or a completely different approach.

Step 4: Define the latency envelope

How fast does this need to be? Real-time? Batch? Somewhere in between? This tells you your infrastructure constraints.

Step 5: Now look at technology

Only now do you open the vendor websites. And when you do, you're evaluating specific solutions against specific constraints, not browsing for inspiration.
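If it helps to see the five steps as data, here is a hedged sketch. Every name and number in it is hypothetical: the leverage scores, the error and latency limits, and the candidate options are placeholders you would replace with your own estimates.

```python
from dataclasses import dataclass


@dataclass
class Envelope:
    max_error_rate: float       # Step 3: how wrong can it be and still be useful
    max_latency_seconds: float  # Step 4: how long can an answer take


@dataclass
class Decision:
    name: str        # Step 1: a judgment someone in the organization makes
    leverage: float  # Step 2: estimated business impact (made-up units)
    envelope: Envelope


@dataclass
class Candidate:
    name: str
    error_rate: float        # measured or vendor-claimed, to be verified
    latency_seconds: float


def fits(candidate: Candidate, envelope: Envelope) -> bool:
    """A candidate qualifies only if it sits inside both envelopes."""
    return (candidate.error_rate <= envelope.max_error_rate
            and candidate.latency_seconds <= envelope.max_latency_seconds)


def shortlist(decision: Decision, candidates: list[Candidate]) -> list[str]:
    """Step 5: evaluate specific solutions against specific constraints."""
    return [c.name for c in candidates if fits(c, decision.envelope)]


decisions = [
    Decision("answer FAQ questions", leverage=2.0,
             envelope=Envelope(max_error_rate=0.20, max_latency_seconds=5)),
    Decision("schedule follow-ups", leverage=9.0,
             envelope=Envelope(max_error_rate=0.05, max_latency_seconds=8 * 3600)),
]
candidates = [
    Candidate("option A", error_rate=0.03, latency_seconds=3600),
    Candidate("option B", error_rate=0.10, latency_seconds=0.5),
    Candidate("option C", error_rate=0.04, latency_seconds=2 * 86400),
]

top = max(decisions, key=lambda d: d.leverage)
print(top.name)                    # schedule follow-ups
print(shortlist(top, candidates))  # ['option A']
```

Option B is the fastest and option C is nearly as accurate as A, but neither fits the envelope. That is the difference between evaluating and browsing.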

A Real Example

A healthcare company I worked with started with technology. They wanted "AI for patient engagement." Six months of RFPs, pilot programs, and vendor demos. They ended up with a chatbot that 3% of patients used, mostly to ask questions that were already in the FAQ.

We started over with the decision-first approach.

What decisions matter? Clinicians deciding whether to schedule follow-up appointments based on patient risk scores. This was being done by intuition, inconsistently, with some high-risk patients falling through the cracks.

What is the cost of being wrong? High. Missing a high-risk patient means worse outcomes. But flagging too many low-risk patients means wasted resources. The system needed to be accurate, not just automated.

What is the cost of being slow? Low. This was a batch process, run overnight. No need for real-time infrastructure.

What technology? A simple classification model on structured data. No LLM. No RAG. No agents. Just a model that read patient records and flagged high-risk patients for clinician review. Deployed in six weeks. Used by 100% of clinicians. Reduced missed high-risk patients by 40%.

The technology was boring. The outcome wasn't.
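The trade-off in that project, missing high-risk patients versus flagging too many low-risk ones, comes down to where you set the flagging threshold. A toy sketch follows. The scores and labels are made up for illustration (they are not the client's data), and `count_errors` is a hypothetical helper, not the model we deployed.

```python
def count_errors(scores, is_high_risk, threshold):
    """Return (missed high-risk patients, over-flagged low-risk patients)."""
    missed = sum(1 for s, y in zip(scores, is_high_risk) if y and s < threshold)
    over_flagged = sum(1 for s, y in zip(scores, is_high_risk)
                       if not y and s >= threshold)
    return missed, over_flagged


# Illustrative model scores and true labels for six patients (invented values).
scores = [0.9, 0.7, 0.4, 0.6, 0.2, 0.1]
is_high_risk = [True, True, True, False, False, False]

for threshold in (0.8, 0.5, 0.15):
    print(threshold, count_errors(scores, is_high_risk, threshold))
# 0.8 (2, 0)
# 0.5 (1, 1)
# 0.15 (0, 2)
```

Raise the threshold and you miss patients. Lower it and you waste clinician time. Which side to err on is a question about the decision, not about the model.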

Why This Is Hard

The technology-first approach is seductive because it feels like progress. You're "evaluating vendors." You're "doing research." You're "staying current."

The decision-first approach feels slow. You're asking questions without immediate answers. You're mapping processes instead of building demos. You're resisting the urge to open a vendor website.

But the technology-first approach just defers the hard work. You still have to figure out what you're building. You just figure it out after you've already committed to a technology stack, which means you build around the technology instead of the problem.

The Real Framework

There is no framework. That's the point.

Every business has different decisions that matter. Every decision has different error and latency constraints. Every constraint leads to different technology choices.

The "best practice" frameworks are just technology-first planning dressed up as strategy. They tell you what tools to use without asking what problems you have.

The real work is understanding your own decisions well enough to know what constraints matter. Everything else follows.

What To Do Instead

If you're planning an AI project right now, try this:

Don't open a single vendor website for two weeks. Don't read any product documentation. Don't watch any demos.

Instead, interview ten people in your organization who make decisions. Ask them what information they wish they had. Ask them what they do when they're uncertain. Ask them what slows them down.

Map those decisions. Prioritize by leverage. Define error and latency envelopes.

Then, and only then, look at technology.

You'll be amazed how much clearer the choices become when you know what you're actually trying to solve.

Work With Versalence

We help businesses build AI strategies that start with decisions, not technology. Decision mapping, constraint analysis, and technology selection based on what you actually need.

📧 versalence.ai/contact.html | sales@versalence.ai