Long Live MCP: Why the Model Context Protocol Is Facing an Evolution in 2026

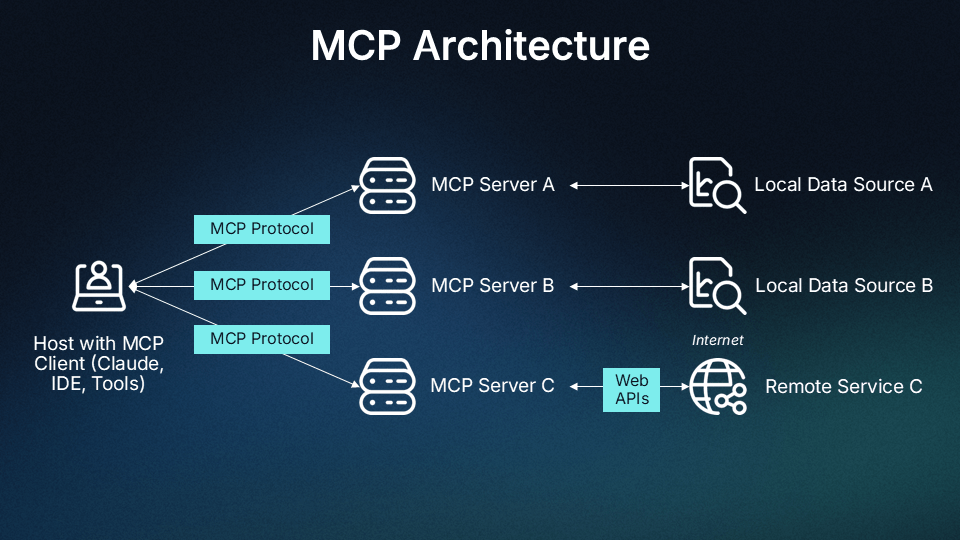

The Model Context Protocol (MCP) is an open-source standard developed by Anthropic in 2024 that allows AI models to seamlessly connect with external data sources, tools, and software systems. It acts as a universal, standardized connector—similar to a USB-C port for AI—enabling AI models to read files, query databases, and use tools without custom integrations for each service.

Key Features and Benefits of MCP:

Standardized Integration: Eliminates the "N×M" integration problem where every AI app needs a custom connector for every data source, simplifying it to a standard client-server model.

Context-Aware Action: Enables AI assistants to securely access local files, databases, or APIs to perform actions, not just generate text, thus supporting agentic AI.

Open Standard: Developed by Anthropic and open-sourced to become an industry standard for AI connectivity.

Secure & Flexible: Works locally (as a sub-process) or remotely (via servers), allowing developers to control what data the LLM can access.

How MCP Works:

Clients: The AI application (e.g., Claude or an IDE) that needs data or tools.

Servers: Programs that expose tools (functions) and resources (data), with each tool's inputs described by a JSON Schema.

Connection: The client connects to the server over a standard transport (such as stdio for local servers or HTTP with server-sent events for remote ones), allowing the AI to call tools dynamically.
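A rough sketch of what crosses that connection, assuming the JSON-RPC 2.0 framing and the `tools/list` and `tools/call` method names used by the MCP specification. The `read_file` tool, its schema, and the `make_request` helper are hypothetical and exist only for illustration.

```python
import json
from typing import Optional

# Hypothetical tool definition, shaped like an entry a server might return
# in response to tools/list (name, description, JSON Schema for the inputs).
READ_FILE_TOOL = {
    "name": "read_file",
    "description": "Read a UTF-8 text file and return its contents.",
    "inputSchema": {
        "type": "object",
        "properties": {"path": {"type": "string"}},
        "required": ["path"],
    },
}


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request as a single JSON string."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# The client first asks which tools exist, then calls one of them by name.
list_request = make_request(1, "tools/list")
call_request = make_request(
    2, "tools/call", {"name": "read_file", "arguments": {"path": "notes.txt"}}
)
```

Every definition like `READ_FILE_TOOL` ends up in the model's context, which is where the token cost discussed later comes from.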

MCP differs from RAG (Retrieval-Augmented Generation) in that RAG focuses on retrieving knowledge for generating better text, while MCP focuses on taking actions and interacting with tools.

The Problem:

In typical deployments, MCP tool metadata reportedly consumes 40-50% of the available context window before agents perform any actual work. In March 2026, Perplexity CTO Denis Yarats announced his company is moving away from MCP toward traditional APIs and CLI tools. Y Combinator CEO Garry Tan built a CLI instead of using MCP. And a growing chorus of engineers report that MCP's promise of interoperability comes at a cost few anticipated: staggering token consumption, authentication friction, and reduced agent autonomy.

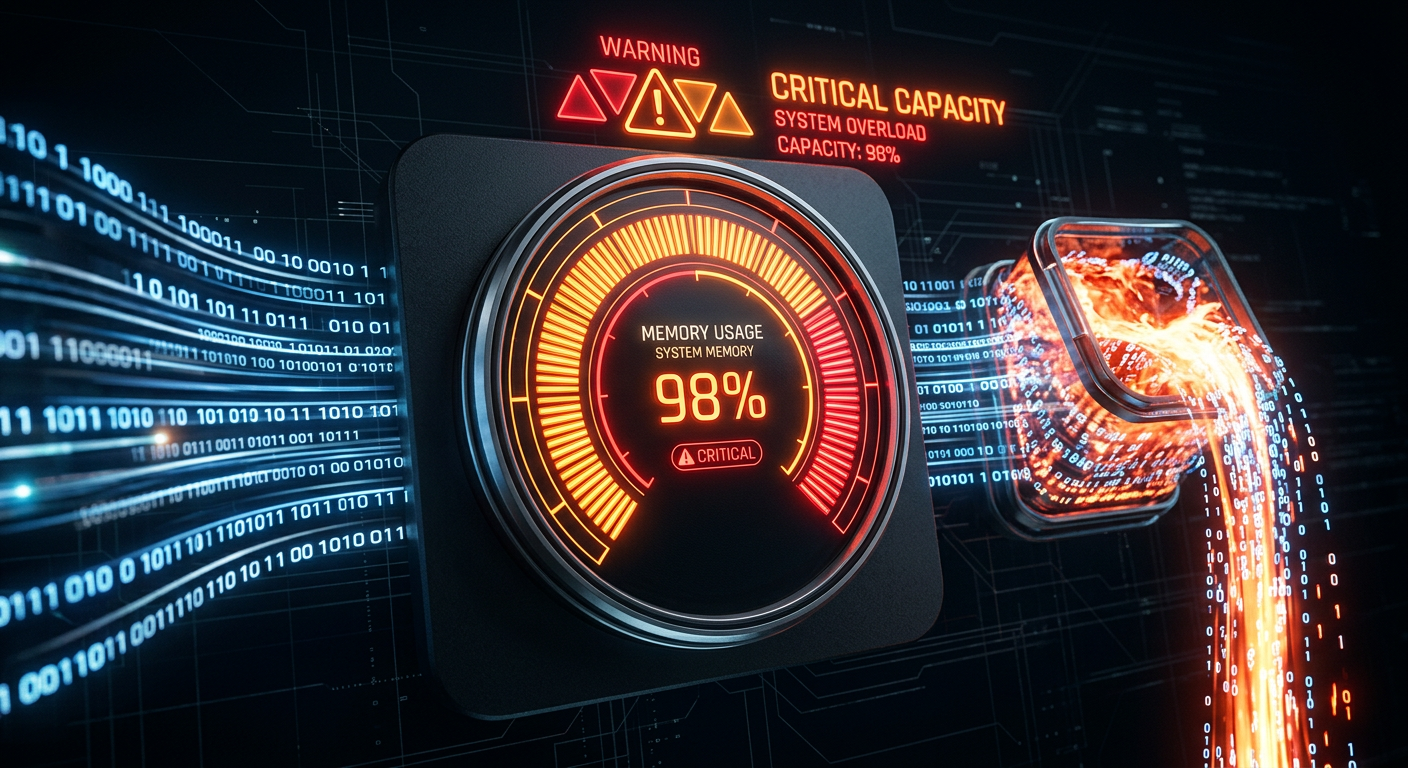

The Token Consumption Crisis

When Anthropic released MCP in late 2024, the pitch was compelling: a universal protocol enabling any AI agent to discover and use any tool through a standardized interface. Build a tool once, connect it anywhere. The protocol gained rapid adoption — Claude, Cursor, VS Code, Windsurf, and dozens of other applications added MCP support. Perplexity itself shipped an MCP Server in November 2025.

But as teams moved from experimentation to production, a critical problem emerged. MCP servers consume massive amounts of context window tokens just to initialize. When an AI agent connects to an MCP server, it receives comprehensive documentation about every available tool — names, descriptions, full JSON schemas, parameters, types, constraints. A typical MCP server contains 20-30 tools. Organizations rarely connect just one server; they integrate five, six, or more to provide diverse capabilities.
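To get a feel for how that adds up, here is a minimal sketch that estimates the token cost of tool definitions. It assumes a crude four-characters-per-token heuristic rather than a real tokenizer, and the servers and tools are generated placeholders, so the absolute numbers mean little. The point is that the cost scales with servers times tools.

```python
import json
import math
from typing import Dict, List

CHARS_PER_TOKEN = 4  # assumption: crude heuristic, not a real tokenizer


def estimate_tokens(obj: object) -> int:
    """Approximate the token cost of a JSON-serializable object."""
    return math.ceil(len(json.dumps(obj)) / CHARS_PER_TOKEN)


def make_fake_tool(index: int) -> dict:
    """Build a hypothetical tool definition with a small JSON Schema."""
    return {
        "name": f"tool_{index}",
        "description": "Placeholder description of what this tool does and when to use it.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "Search text."},
                "limit": {"type": "integer", "minimum": 1, "maximum": 100},
            },
            "required": ["query"],
        },
    }


def schema_overhead(servers: Dict[str, List[dict]]) -> Dict[str, int]:
    """Estimated tokens spent on tool definitions, per server."""
    return {
        name: sum(estimate_tokens(tool) for tool in tools)
        for name, tools in servers.items()
    }


# Six hypothetical servers with 25 tools each, matching the ranges in the text.
servers = {f"server_{n}": [make_fake_tool(i) for i in range(25)] for n in range(6)}
per_server = schema_overhead(servers)
total_tokens = sum(per_server.values())
```

Swapping in real tool definitions and a real tokenizer would give a usable budget check before connecting another server.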

The Real-World Numbers

The token consumption is not theoretical. Engineers report concrete figures that explain why production teams are reconsidering MCP:

- 7 MCP servers active: 67,300 tokens consumed (33.7% of 200k context) before any conversation begins

- GitHub MCP server alone: Nearly 25% of Claude Sonnet's context window

- Full MCP setup (reported by one developer): 143K of 200K tokens (72% usage) with MCP tools consuming 82K tokens

- Tool metadata overhead: 40-50% of available context in typical deployments (Gil Feig, CTO of Merge)
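The percentages above can be checked with a few lines of arithmetic. This sketch assumes the 200K-token window quoted in the list; the variable names are just labels for the reported figures.

```python
CONTEXT_WINDOW = 200_000  # tokens, as quoted in the figures above


def share_of_window(tokens: int, window: int = CONTEXT_WINDOW) -> float:
    """Return the percentage of the context window that `tokens` occupies."""
    return 100 * tokens / window


seven_servers_pct = share_of_window(67_300)   # the 7-server report
full_setup_pct = share_of_window(143_000)     # the 143K-of-200K report
mcp_tools_pct = share_of_window(82_000)       # MCP tools within that setup
tokens_left = CONTEXT_WINDOW - 143_000        # what remains for actual work
```

The results are 33.65%, 71.5%, and 41% respectively, so the 82K-token figure for MCP tools alone sits inside the 40-50% range attributed to Gil Feig, and the full setup leaves 57,000 tokens for the conversation itself.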

Kevin Swiber, API strategist at Layered System, notes that "tool descriptions include too much data — it's token-wasteful and makes it harder for the LLM to choose the right tool." The result: agents spend increasing effort deciding what not to use, costs rise with larger prompts, accuracy drops when multiple tools overlap, and eventually sessions hit token limits and break.

The Perplexity Announcement: A Turning Point

At the Ask 2026 conference on March 11, Perplexity CTO Denis Yarats made the announcement that crystallized industry concerns. Perplexity — a company that had itself shipped an MCP Server just months earlier — is moving away from MCP internally. Yarats cited two core issues: high context window consumption and clunky authentication flows that create friction when connecting to multiple services.

The authentication problem is structural. MCP requires each server to handle its own auth flow. When agents connect to multiple services, this creates a fragmented authentication landscape that breaks across service boundaries. For production systems requiring consistent security postures, audit logging, and single sign-on, this is untenable.

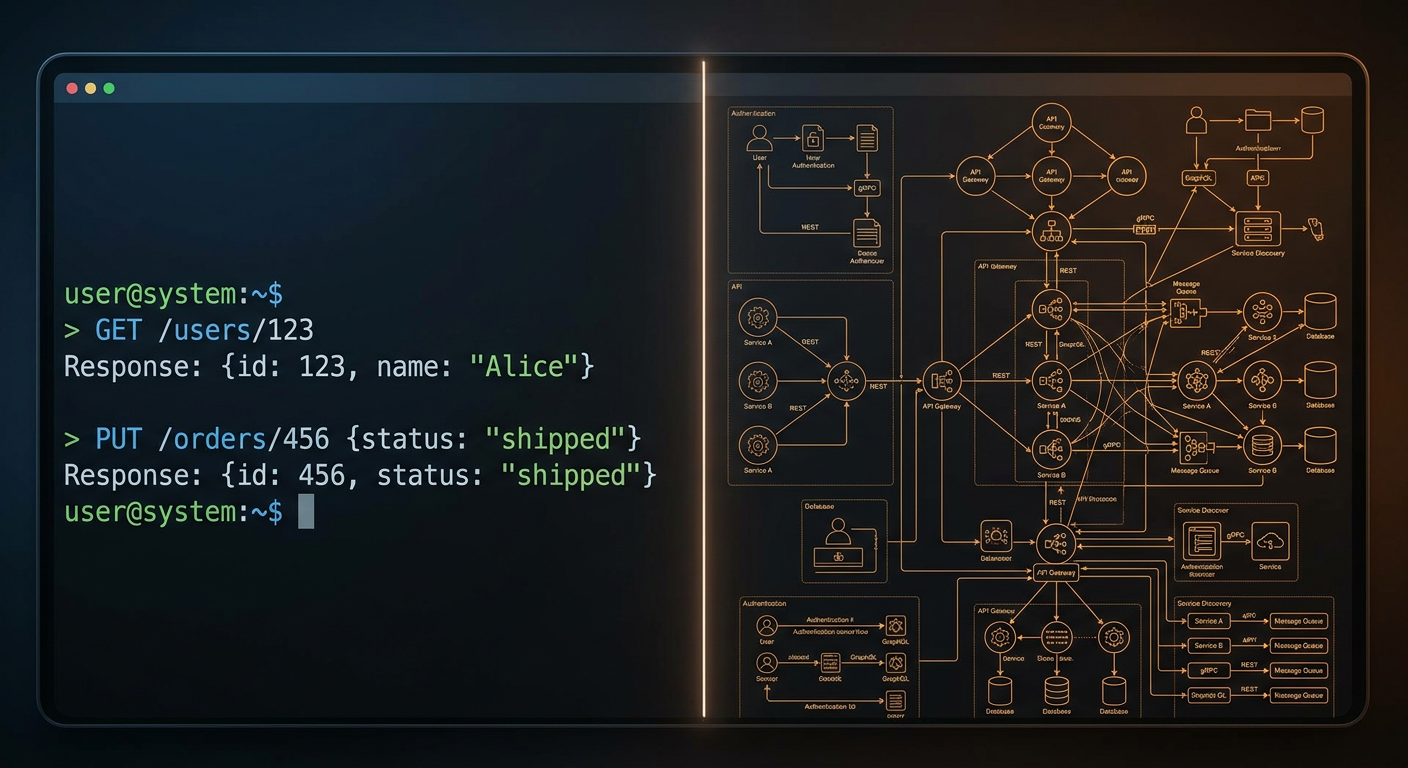

The Agent API Alternative

Perplexity's alternative is the Agent API, launched in February 2026. Instead of connecting to separate MCP servers for each capability, developers hit a single endpoint with one API key. The API handles tool execution internally rather than pushing complexity to the client.

| Feature | Details |
|---|---|
| Endpoint | POST https://api.perplexity.ai/v1/agent |
| Models | GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, Grok 4.1 Fast, Nemotron 3 Super, Sonar |
| Built-in Tools | Web search ($0.005/call), URL fetch ($0.0005/call), function calling (free) |
| Compatibility | OpenAI SDK format — change base URL and key |

For teams already using the OpenAI SDK, switching requires changing two lines of code. The design is deliberately simple: no tool schemas in the system prompt, no separate auth per tool, no MCP server management.
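As a sketch of the single-endpoint, single-key idea, the snippet below builds (but does not send) a request using only the standard library. The endpoint URL comes from the table above. Everything else is an assumption made for illustration: the bearer-token header, the `model` and `input` body fields, and the `"sonar"` model identifier are not taken from Perplexity's documentation and should be checked against it.

```python
import json
import urllib.request

AGENT_ENDPOINT = "https://api.perplexity.ai/v1/agent"  # from the table above


def build_agent_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for the agent endpoint without sending it."""
    # ASSUMPTION: the body field names are illustrative, not a documented schema.
    payload = {"model": model, "input": prompt}
    return urllib.request.Request(
        AGENT_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # ASSUMPTION: bearer-token auth, as is common for OpenAI-style APIs.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_agent_request("YOUR_API_KEY", "sonar", "Summarize recent MCP news.")
# urllib.request.urlopen(request) would send it; omitted so the sketch runs offline.
```

Note what is absent: no tool schemas are assembled client-side and only one credential appears, which is the design contrast with a multi-server MCP setup.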

The CLI Revolution: Why Top Engineers Are Building Direct

While Perplexity bets on APIs, another movement gains momentum: CLI-first approaches. Y Combinator CEO Garry Tan built a CLI for his use case rather than working through MCP, citing reliability and speed as deciding factors. This pattern appears across the developer ecosystem — when teams hit production requirements (uptime, latency, predictable costs), they tend to reach for CLIs over protocol-level abstractions.

The Performance Gap

One benchmark comparing CLI and MCP for browser automation found that the CLI approach was 33% more token-efficient and scored 77 against MCP's 60 on task completion. The gap was largest in multi-step debugging workflows, where the context budget ran out mid-task with MCP but not with CLI.

The CLI approach works because:

- Zero schema overhead: No tool definitions consuming context

- Direct execution: Agents call tools via shell commands they understand from training

- Selective context: Only relevant tools are referenced, not entire server schemas

- Context savings: Organizations switching to CLI-based approaches report 4-5% context window savings
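A minimal sketch of the direct-execution idea: the agent's harness runs a command and hands back only a bounded slice of output. The `run_cli` helper and the output cap are hypothetical, and a real harness would also want an allowlist of permitted commands.

```python
import subprocess
import sys
from typing import List

MAX_OUTPUT_CHARS = 2_000  # hypothetical cap so one command cannot flood the context


def run_cli(argv: List[str], timeout: float = 30.0) -> str:
    """Run a command without a shell and return truncated stdout or error text."""
    result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    if result.returncode == 0:
        output = result.stdout
    else:
        output = f"exit {result.returncode}: {result.stderr}"
    return output[:MAX_OUTPUT_CHARS]


# The Python interpreter stands in for any CLI the agent already knows how to use.
version_text = run_cli([sys.executable, "--version"])
long_text = run_cli([sys.executable, "-c", "print('x' * 5000)"])
```

Nothing about the tool is described to the model up front; the only context spent is the command itself and whatever output comes back.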

As one engineer summarized: "A CLI works when you know exactly what tools you need. MCP works when the agent needs to figure that out at runtime. For production pipelines with known tool sets, direct APIs and CLIs are simpler, faster, and cheaper."

Dynamic Skills: The Documentation Alternative

Alongside CLI tools, "Skills" are emerging as a lightweight alternative to MCP. Where MCP servers provide real-time tool execution, Skills provide static documentation context that teaches AI assistants SDK patterns, API references, and best practices.

Christian Posta, VP at Solo.io, recommends using no more than 10-15 tools at a time to avoid context bloat. Skills address this by loading minimal context first and expanding only when necessary. Neeraj Abhyankar, VP of Data and AI at R Systems, notes that deduplicating schemas, scoping tools into namespaces, and caching metadata can cut token usage by 30-60%.
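The "minimal context first" idea can be sketched as an index of one-line summaries with the full text loaded only on request. The `Skill` class, its fields, and the example skills are hypothetical and do not reflect any particular product's format.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Skill:
    name: str
    summary: str                   # one line, always present in context
    load_body: Callable[[], str]   # full documentation, fetched only on demand


def initial_context(skills: List[Skill]) -> str:
    """Return the small index that is loaded before any work starts."""
    return "\n".join(f"{skill.name}: {skill.summary}" for skill in skills)


def expand(skills: List[Skill], name: str) -> str:
    """Load the full body of one skill when the agent decides it is needed."""
    for skill in skills:
        if skill.name == name:
            return skill.load_body()
    raise KeyError(name)


skills = [
    Skill("payments-sdk", "How to call the payments SDK.", lambda: "FULL PAYMENTS GUIDE " * 200),
    Skill("style-guide", "House coding conventions.", lambda: "FULL STYLE GUIDE " * 200),
]
index_text = initial_context(skills)
payments_doc = expand(skills, "payments-sdk")
```

Here the index is two short lines while each body runs to thousands of characters, so the up-front cost stays small no matter how many skills are registered.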

The most powerful setup often combines both approaches: Skills for foundational knowledge (SDK documentation, coding patterns, best practices) and MCP for dynamic operations (content creation, real-time queries, actions). This hybrid model gives teams consistency where they need it and flexibility where it matters.

The Code Execution Alternative

The third major alternative gaining traction is code execution — allowing agents to generate and execute code directly rather than working through predefined tool definitions. This approach has demonstrated token consumption reductions of up to 98% compared to traditional MCP implementations.

Instead of agents receiving descriptions of what tools do and trying to use them, they examine the code that implements the tool, understand exactly what it does, and call it directly. Agents can handle intermediate results intelligently — saving large documents to the file system and extracting only needed information rather than passing 50,000 tokens through the context window.
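A minimal sketch of the intermediate-results pattern, assuming a simple keyword match is enough to find what matters: the large document goes to disk, and only the matching lines come back. The `stash_and_extract` helper and the sample document are hypothetical.

```python
import tempfile
from pathlib import Path
from typing import List, Tuple


def stash_and_extract(document: str, keyword: str, max_lines: int = 5) -> Tuple[Path, List[str]]:
    """Save a large document to a temp file and return only the relevant lines."""
    with tempfile.NamedTemporaryFile(
        "w", suffix=".txt", delete=False, encoding="utf-8"
    ) as handle:
        handle.write(document)
        path = Path(handle.name)
    matches = [
        line for line in document.splitlines() if keyword.lower() in line.lower()
    ]
    return path, matches[:max_lines]


# A large hypothetical document with one line that actually matters.
filler = "\n".join(f"row {i}: nothing of interest" for i in range(10_000))
document = filler + "\ninvoice total: 42\n"
saved_path, relevant = stash_and_extract(document, "invoice total")
```

The model sees one short line and a file path instead of ten thousand rows, and it can reopen the file later if it needs more.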

An agent that previously consumed 10,000 tokens just to initialize with MCP servers might now consume only 200 tokens with code execution, a 98% reduction that frees context space for actual task execution and reasoning.

Security Considerations in the Post-MCP Landscape

Beyond token efficiency, security is driving the shift away from MCP. Enterprise organizations in regulated industries have significant concerns about data privacy. When using MCP with external model providers, all data flowing through the agent — including sensitive business information, customer data, and proprietary details — is transmitted to the model provider's infrastructure.

CLI and code execution approaches provide solutions through what security experts call "data harnesses." By implementing code execution in controlled environments, organizations can add layers that automatically anonymize or redact sensitive data before exposure to external model providers. This capability is particularly valuable for healthcare, finance, and legal organizations where data privacy is paramount.
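A redaction layer of this kind can be sketched with two regular expressions. The patterns below (email addresses and US Social Security number formats) are illustrative assumptions only; a production harness would need far broader coverage and proper review.

```python
import re

# Illustrative patterns only; real deployments need a much more complete set.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace sensitive substrings with placeholders before text leaves the sandbox."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return SSN_PATTERN.sub("[SSN]", text)


safe_text = redact("Contact jane.doe@example.com, SSN 123-45-6789.")
# safe_text == "Contact [EMAIL], SSN [SSN]."
```

Because the code runs inside the organization's own environment, the raw values never need to reach the external model provider.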

The authentication model also differs significantly. MCP's distributed auth model creates multiple attack surfaces. CLI and API approaches centralize authentication, making it easier to implement consistent security policies, audit logging, and access controls across the entire agent ecosystem.

MCP's Defense: Why Standardization Still Matters

MCP defenders argue that critics miss the point. The protocol's value lies in dynamic tool discovery — agents that can find and use tools they weren't explicitly programmed to call. Progressive discovery, where agents explore available tools incrementally, is a capability that hardcoded API integrations cannot replicate.
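One way to picture progressive discovery is a two-step lookup: search a catalog by keyword to get names only, then fetch the full schema for the single tool the agent picks. The catalog, tool names, and both helper functions are hypothetical and not part of any MCP SDK.

```python
from typing import Dict, List

# Hypothetical catalog; a real one would come from connected servers.
CATALOG: Dict[str, dict] = {
    "create_issue": {
        "description": "Create an issue in a repository.",
        "inputSchema": {"type": "object", "properties": {"title": {"type": "string"}}},
    },
    "list_issues": {
        "description": "List open issues.",
        "inputSchema": {"type": "object", "properties": {"repo": {"type": "string"}}},
    },
    "send_email": {
        "description": "Send an email message.",
        "inputSchema": {"type": "object", "properties": {"to": {"type": "string"}}},
    },
}


def search_tools(catalog: Dict[str, dict], query: str, limit: int = 3) -> List[str]:
    """Return names of tools whose name or description contains every query word."""
    words = query.lower().split()
    hits = [
        name
        for name, tool in catalog.items()
        if all(word in f"{name} {tool['description']}".lower() for word in words)
    ]
    return hits[:limit]


def describe_tool(catalog: Dict[str, dict], name: str) -> dict:
    """Return the full definition for one tool, loaded only after it is chosen."""
    return {"name": name, **catalog[name]}


candidates = search_tools(CATALOG, "issue")
chosen = describe_tool(CATALOG, candidates[0])
```

The agent's context holds a short list of names and one schema rather than every definition in the catalog, while new tools added to the catalog become findable without code changes.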

For open-ended agent systems that need to adapt to new tools without code changes, MCP's standardized discovery layer solves a real problem. Teams still use MCP for rapid prototyping and simple integrations. Manufact, a Y Combinator startup, just raised $6.3 million to build open-source tools and cloud infrastructure for MCP, calling it the "USB-C for AI." Anthropic continues expanding MCP capabilities, recently adding shared context between Excel and PowerPoint add-ins.

The tension is not about which approach is better in absolute terms. It is about which tradeoffs matter for a given use case:

- MCP: Dynamic discovery, real-time data, complex multi-tool orchestration

- CLI/API: Known tool sets, speed, predictable costs, production reliability

The Future: Pragmatic Hybrid Architectures

Perplexity's move is pragmatic, not ideological. They still run an MCP Server for developers who want it. But their flagship product for agent builders is now a traditional API that absorbs complexity internally.

The bigger question is whether MCP's adoption momentum survives as more companies build API alternatives that ship faster with fewer integration headaches. The protocol has institutional support from Anthropic and broad IDE adoption, but developer tooling tends to converge on whatever is easiest to integrate.

The emerging consensus for 2026 is architectural flexibility:

- Start with Skills for documentation and patterns — zero maintenance, works offline

- Add CLI tools for local, fast operations where training data is sufficient

- Use APIs for managed runtimes and external service coordination

- Reserve MCP for scenarios requiring dynamic tool discovery and runtime adaptation
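The checklist above can be read as a small decision function. This is only one possible encoding: the three boolean flags are hypothetical simplifications, and real architectures will usually combine several approaches.

```python
from enum import Enum


class Approach(Enum):
    SKILLS = "skills"
    CLI = "cli"
    API = "api"
    MCP = "mcp"


def choose_approach(
    needs_runtime_discovery: bool, executes_actions: bool, runs_locally: bool
) -> Approach:
    """Pick a starting point for one capability, following the checklist order."""
    if needs_runtime_discovery:
        return Approach.MCP      # tools are not known ahead of time
    if not executes_actions:
        return Approach.SKILLS   # documentation and patterns are enough
    if runs_locally:
        return Approach.CLI      # fast local operations with known tools
    return Approach.API          # managed runtimes and external services


docs_only = choose_approach(False, False, True)
local_build = choose_approach(False, True, True)
hosted_search = choose_approach(False, True, False)
open_ended_agent = choose_approach(True, True, False)
```

Applied per capability rather than per project, the function naturally produces the hybrid setups described in this section.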

The Bottom Line

MCP is not dead. As the "long live" of the title suggests, it is facing an evolution: the protocol must address token consumption and authentication friction or be relegated to prototyping while production systems migrate to more efficient alternatives. The shift away from MCP by Perplexity, Y Combinator's CEO, and other prominent engineers reflects a maturing AI agent development space.

The question is no longer whether to use MCP, but when. For rapid prototyping and dynamic discovery, MCP remains valuable. For production deployments where efficiency and autonomy matter, CLI tools, direct APIs, and code execution approaches are proving superior. The future belongs to teams that understand these tradeoffs and architect accordingly — not those that default to standardized approaches without examining actual requirements.

Work With Versalence

We help businesses architect AI systems that compound in value over time. Our expertise spans the full spectrum of AI agent architectures — from MCP integrations to CLI-first approaches, from API orchestration to code-execution systems. If you are navigating the shift from experimental MCP deployments to production-grade AI infrastructure, we can help:

- AI Infrastructure Assessment — Evaluate your current agent architecture and identify token optimization opportunities

- Custom Deployment — End-to-end setup of CLI-first, API-based, or hybrid agent systems tailored to your use cases

- MCP Optimization — Reduce context bloat through schema minimization, dynamic loading, and selective tool exposure

- Agent Architecture Migration — Transition from MCP to more efficient architectures without losing capabilities

- Training and Support — Empower your team to manage and extend AI agent capabilities
